The robot does not know that the world in the simulator was kind to it.

In the virtual room, the floor had perfectly uniform friction. The box edges were crisp. The lighting never flickered. The camera had no dust on the lens. The motor commands arrived exactly when expected. The object model had the right mass, the right shape, and no hidden dent from being dropped last week. A simulated gripper can practice a million grasps without wearing its pads down, overheating a motor, knocking over a table, or annoying a technician who has to reset the scene.

That is the seduction of simulation. A robot can practice faster than real time, cheaper than real hardware, and with less risk. It can fall without breaking. It can crash into virtual shelving without injuring anyone. It can repeat the same task until a learning algorithm finds patterns that would be too expensive to discover entirely on a lab floor. For robot learning, simulation is not a toy. It is one of the few ways to gather enough varied experience to train systems that must act in physical space.

The trouble begins when the trained behavior meets the real room.

The Gap Is Small Until It Matters

Sim-to-real is the name for the transfer from simulation to real hardware. The phrase sounds technical, but the basic problem is familiar. Practicing a piano piece on a silent keyboard teaches finger order, but it does not teach touch, sound, pedal feel, room acoustics, or the tiny pressure changes that make the music alive. A flight simulator can train a pilot, but nobody pretends that weather, maintenance, fatigue, cockpit vibration, and judgment disappear because the screen was accurate.

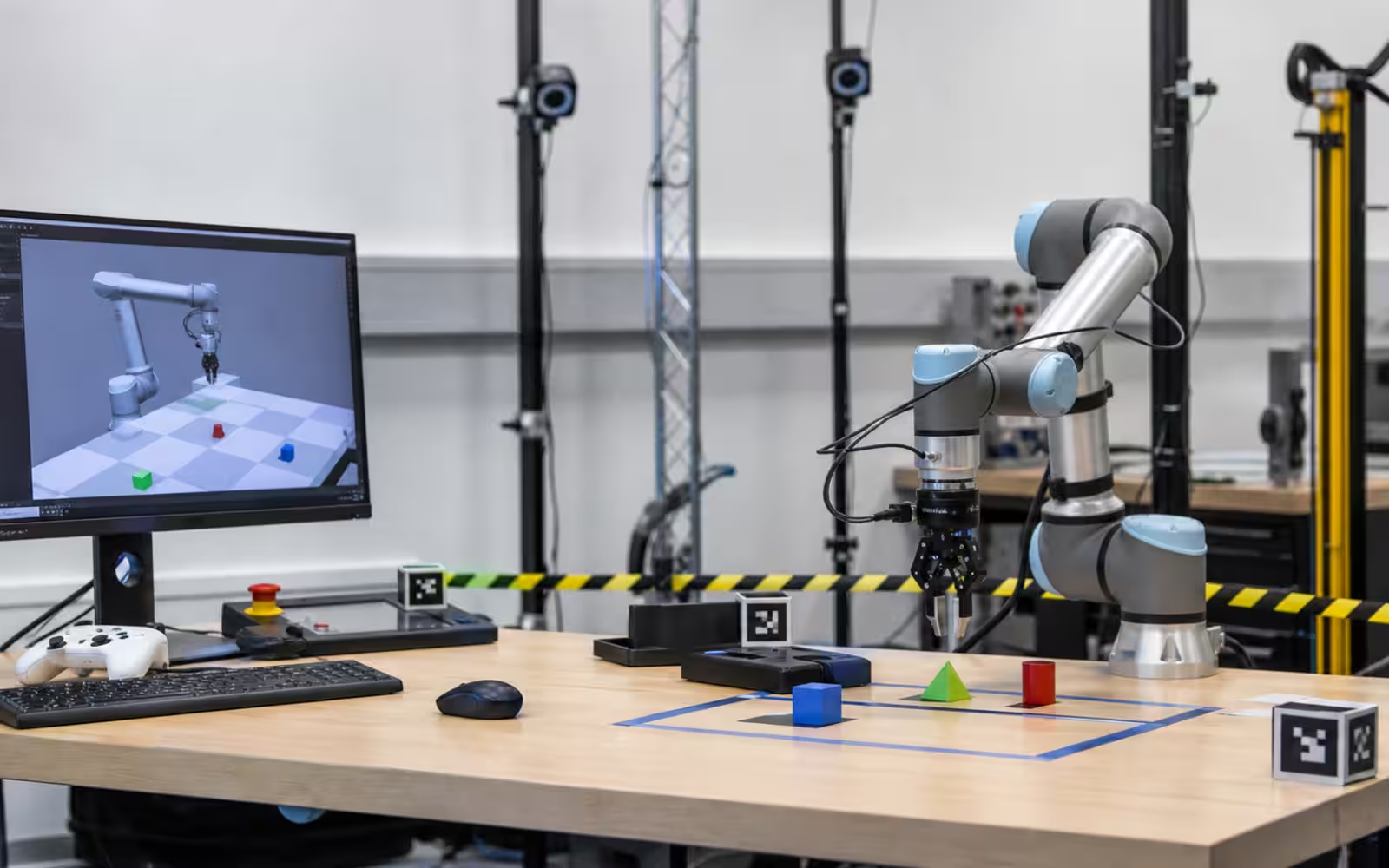

Robots face the same translation problem. A virtual robot may learn that a cup can be picked from the side, but the real cup may be slightly sticky, reflective, flexible, wet, chipped, or heavier than its mesh model suggested. The simulated camera may see clean object boundaries while the real camera sees glare, shadow, motion blur, and clutter. The planner may assume that the robot’s joints hit commanded positions exactly, while the real arm has backlash, compliance, cable drag, calibration error, or a worn bearing.

Most of these differences are boring in isolation. Together they decide whether the robot works. A one-centimeter perception error, a half-second delay, and a slightly lower friction coefficient can turn a clean virtual success into a dropped object. In software, a small mismatch may be a bug. In robotics, a small mismatch may be gravity doing the final code review.

Why Simulation Is Still Worth It

If simulation is imperfect, the tempting reaction is to dismiss it. That would be a mistake. Real-world robot data is slow and expensive. A robot arm can only move so fast. A mobile robot can only drive through so many rooms in a day. A humanoid can only fall so many times before someone has to inspect the hardware. A warehouse pilot can only disrupt operations so much before the customer loses patience.

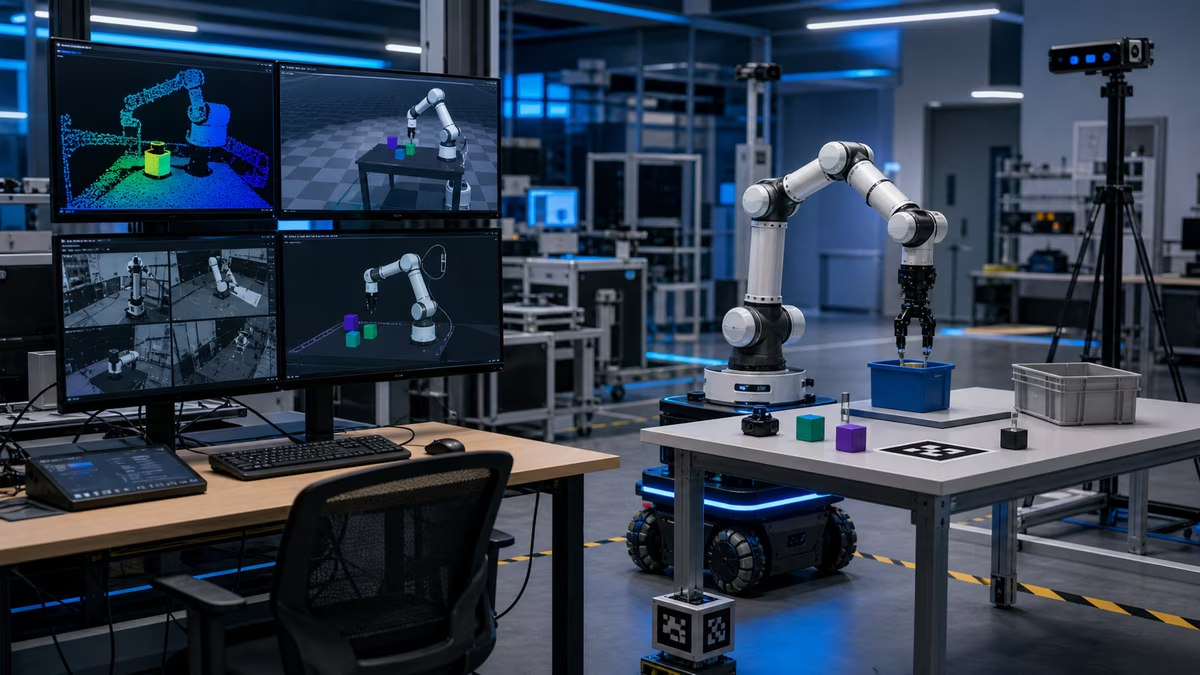

Simulation lets teams explore the space before they spend physical time. It is especially useful for tasks where the broad structure matters more than every texture detail. Navigation, manipulation planning, collision checking, reinforcement learning, grasp sampling, fleet behavior, warehouse layouts, and safety envelopes can all benefit from simulated trials. A team can test thousands of shelf arrangements, object positions, lighting changes, or traffic patterns before choosing what to run on real machines.

Simulation also makes rare events less rare. A delivery robot may only occasionally meet a blocked hallway, a slippery ramp, a pet, a crowded elevator, or a bad wireless zone. If those events matter, the team can create them deliberately in a virtual world and see whether the policy has any sensible response. The simulator becomes a rehearsal space for trouble.

The value is not that the simulator replaces the world. The value is that it helps the team arrive at the world with better questions.

Randomness as Training

One common bridge across the gap is domain randomization. Instead of trying to make the simulator perfectly match one real environment, engineers deliberately vary many properties during training. The floor gets more or less slippery. Objects change shape, color, weight, and position. Lighting moves. Camera noise changes. Joint friction shifts. Textures become messy. The robot is forced to succeed across a family of worlds rather than overfit to one clean model.

The idea is practical. If the simulated training world is too exact, the robot may learn brittle tricks. It may depend on a shadow, a fixed camera angle, a perfect object placement, or a friction value that never appears in real life. Randomization makes those shortcuts less reliable. The robot has to learn behavior that survives variety.
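
To make the idea concrete, here is a minimal sketch of per-episode randomization in Python. Everything in it is an illustrative assumption: the parameter names, the numeric ranges, and the `sample_episode_params` helper are hypothetical and do not come from any particular simulator. A real project would pass the sampled values to whatever configuration interface its simulator exposes.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeParams:
    """Physical and visual settings for one simulated training episode."""
    floor_friction: float        # friction coefficient, dimensionless
    object_mass_kg: float        # mass of the object to be grasped
    light_intensity: float       # relative to a nominal value of 1.0
    camera_noise_std: float      # noise std as a fraction of full pixel scale
    joint_friction_scale: float  # multiplier on nominal joint friction


# Hypothetical (low, high) ranges; real values should come from measurement.
RANGES = {
    "floor_friction": (0.4, 1.0),
    "object_mass_kg": (0.05, 0.60),
    "light_intensity": (0.5, 1.5),
    "camera_noise_std": (0.0, 0.05),
    "joint_friction_scale": (0.8, 1.3),
}


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw every parameter independently and uniformly from its range."""
    values = {name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()}
    return EpisodeParams(**values)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so a training run can be reproduced
    for episode in range(3):
        print(episode, sample_episode_params(rng))
```

Each training episode then runs in a slightly different world, so a shortcut that depends on one exact friction value or one lighting level stops paying off. Independent uniform sampling is the simplest choice and is used here only for illustration; other distributions or correlated parameters may suit a given task better.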

But randomization is not magic. If the random range misses the real problem, the policy can still fail. If the virtual objects vary in color but never in deformability, the robot may still struggle with cloth, bags, cables, or food. If the simulated camera adds noise but not realistic glare, a shiny metal part can still break perception. The skill is choosing what to vary and how much. Too little variation produces fragile policies. Too much variation can make learning slow or teach the robot to be overly cautious.
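
One simple way to catch a range that misses the real problem is to compare the randomization ranges against values measured on the real system. The sketch below assumes the team keeps both as plain dictionaries; the `uncovered_parameters` helper, the parameter names, and the numbers are hypothetical and chosen only to illustrate the check.

```python
def uncovered_parameters(ranges, measured):
    """Report measured real-world values that training never covered.

    ranges:   {name: (low, high)} used for randomization in simulation
    measured: {name: value} observed on the real robot or in the real room
    Returns   {name: reason} for every parameter that is not covered.
    """
    problems = {}
    for name, value in measured.items():
        if name not in ranges:
            # The property varies in reality but was never randomized.
            problems[name] = "not randomized in simulation"
            continue
        low, high = ranges[name]
        if not low <= value <= high:
            problems[name] = f"measured {value} outside [{low}, {high}]"
    return problems


if __name__ == "__main__":
    # Hypothetical training ranges and hypothetical lab measurements.
    training_ranges = {
        "floor_friction": (0.4, 1.0),
        "object_color_hue": (0.0, 1.0),
    }
    lab_measurements = {
        "floor_friction": 0.3,        # a freshly polished floor
        "object_color_hue": 0.5,
        "object_deformability": 0.8,  # a soft bag; never varied in training
    }
    for name, reason in uncovered_parameters(training_ranges, lab_measurements).items():
        print(f"{name}: {reason}")
```

A check like this cannot prove that a policy will transfer. It can only flag the obvious gaps, such as a property that was never varied at all.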

Good sim-to-real work therefore looks less like pushing a button and more like investigative craft. The team watches real failures, asks what physical mismatch caused them, adjusts the simulator, retrains or fine-tunes, and tests again. The simulator improves because the real robot embarrasses it.

Digital Twins Are Not Twins Unless They Age

The phrase digital twin often appears in robotics and industrial automation. At its best, it means a maintained virtual representation of a real asset or environment. A factory line, warehouse, robot cell, or energy system can be modeled so teams can plan changes, test throughput, and understand failure modes.

The danger is that the digital twin becomes a digital photograph. It may represent the system as designed, not as operated. The real conveyor develops drift. The bins get replaced. Staff change how they stage materials. The lighting shifts after a maintenance crew swaps fixtures. A sensor gets nudged. The robot’s gripper pads wear down. A twin that does not update becomes a polite fiction.

For physical AI, the living connection matters. The best virtual models are fed by measurement. They use real logs, calibration checks, asset changes, and operator feedback. They become more useful because they are kept honest. The model is not only a place to dream up future behavior. It is a record of the present system, with all the compromises that present systems contain.

This is why sim-to-real is also an operations problem. The learning team, controls team, technicians, safety staff, and site operators all know pieces of the truth. A simulator that ignores the people who maintain the real machine will miss the facts that actually ruin deployments.

The Real Robot Is the Judge

A mature robotics program treats real testing as the judge, not as a publicity event. The lab run should include repeated trials, messy resets, environmental variation, and failure analysis. The team should care about how the robot fails, how quickly it recovers, what it damages, when it asks for help, and whether the same behavior still works after a long day.

The important measure is not whether simulation produced one impressive transfer. It is whether the loop becomes faster and more reliable. When a failure appears on hardware, can the team reproduce a version of it in simulation? Can they test alternate strategies virtually before risking more hardware time? Can they use simulation to narrow the search, then use real data to confirm that the fix survived contact with the world?

This loop is where simulation earns its place. It is not a substitute for reality. It is a disciplined way to spend reality more wisely.

What Beginners Should Watch For

When you hear that a robot was trained in simulation, ask what was transferred. Was it a grasping policy, a walking controller, a navigation planner, a perception model, a task strategy, or a whole system? Ask what real tests followed. Ask whether the environment was close to the simulator or deliberately different. Ask how many trials were run, what failure rate remained, and whether the system was fine-tuned with real data.
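
The trial count matters because a small number of runs says little about the true failure rate. As a rough illustration, the sketch below computes a Wilson score interval for a failure rate. This is a standard statistical tool brought in here as an assumption about how one might quantify the uncertainty, not a method taken from any particular robotics program. The function name `wilson_interval` and the example counts are hypothetical.

```python
import math


def wilson_interval(failures, trials, z=1.96):
    """Approximate 95% Wilson score interval for a failure rate.

    failures: number of failed trials observed on real hardware
    trials:   total number of trials run
    z:        normal quantile; 1.96 corresponds to roughly 95% confidence
    Returns   (low, high) bounds on the underlying failure probability.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= failures <= trials:
        raise ValueError("failures must be between 0 and trials")
    p = failures / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)


if __name__ == "__main__":
    # Hypothetical results: zero observed failures at two different trial counts.
    for failures, trials in [(0, 20), (0, 200)]:
        low, high = wilson_interval(failures, trials)
        print(f"{failures}/{trials} failures: {low:.3f} to {high:.3f}")
```

Under these assumptions, twenty clean trials are still consistent with a true failure rate of roughly 16 percent, while two hundred clean trials bring the upper bound down to roughly 2 percent. A demo with no visible failures is weaker evidence than it looks.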

Also ask whether the use case tolerates imperfection. A simulated policy that helps a robot sort durable packages may be useful at a failure rate that would be unacceptable for handling glassware, medicine, or people. A warehouse robot can operate in an environment that has been redesigned around it. A household robot has to survive rooms that were not built for it. The transfer problem depends on the tolerance of the job.

The honest story of sim-to-real is neither disappointment nor miracle. Simulation gives robots a place to accumulate volumes of practice that would be impossible on hardware. Reality gives them friction, dust, surprise, wear, and consequence. The work is to make those two teachers argue productively until the robot learns behavior that survives both.

That is why virtual practice is powerful, and why it is never enough.