Robot videos often imply a clean story: the robot sees the world, decides what to do, and acts. Real deployments are usually messier and more interesting. Many useful robots are not fully independent actors. They are supervised systems with people nearby, people online, or people available when the robot reaches the edge of its competence. Teleoperation is the name for the human side of that story.

Teleoperation can mean direct remote driving, where a person controls motion in real time. It can mean shared control, where the robot handles low-level movement while a person gives goals or corrections. It can mean exception handling, where the robot works alone until it gets stuck and asks for help. It can also mean quiet monitoring, where a human watches several robots and intervenes only when the system flags risk.

The important point is that teleoperation is not automatically a failure. It is often the bridge between a research demo and a useful service. A robot that can do most of a task but needs human help at the hard moments may still be commercially valuable. A robot that pretends not to need help and fails dangerously is not.

Autonomy is not one switch

People talk about autonomy as if the robot is either autonomous or remote-controlled. In practice, autonomy is layered. A mobile robot may plan its route while a human approves unusual zones. A warehouse robot may navigate on its own but call for help when a pallet is crooked. A home robot may recognize a spill but ask before moving an unfamiliar object. A humanoid may perform a repeated motion from a learned policy while a remote operator supervises the setup.

This layered view matters because it changes how you judge capability. If a robot needs a person to guide every movement, it is closer to a machine with remote hands. If it needs a person only for rare exceptions, it may already be a strong automation tool. The difference is not philosophical. It shows up in staffing, latency tolerance, training, safety, cost, and customer expectations.

A good robotics company should be able to explain where the human fits. Does the operator drive continuously? Does the operator approve plans? Does the operator label confusing objects? Does the operator recover failures? Does one operator supervise one robot, five robots, or fifty? The answer tells you more than a highlight video.

Latency changes the job

Remote control feels simple until delay enters. A person driving a robot through a camera feed needs timely feedback. Even a small delay can make precise motion harder. A larger delay can make direct control unsafe or impossible. This is why remote operation of robots is not the same as playing a video game with a bad connection. The robot has mass, momentum, blind spots, tools, batteries, and sometimes people nearby.

When latency is low, an operator can steer more directly. When latency is higher, the robot needs more local autonomy. Instead of telling the robot every tiny motion, the person may choose a target, approve a grasp, or select a recovery behavior. The robot handles the details because it can react faster to immediate sensor data.
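One way to picture this tradeoff is a small function that picks a control mode from measured round-trip delay. This is only a sketch: the mode names, the `select_control_mode` function, and the 100 ms and 500 ms thresholds are illustrative assumptions, not values from any particular robot or standard. Real limits depend on the robot's speed, mass, and task.

```python
from enum import Enum


class ControlMode(Enum):
    DIRECT = "direct"          # operator steers motion continuously
    SHARED = "shared"          # operator picks targets, robot handles motion
    SUPERVISED = "supervised"  # robot acts alone, operator approves or recovers


# Hypothetical thresholds for illustration only.
DIRECT_MAX_MS = 100.0
SHARED_MAX_MS = 500.0


def select_control_mode(round_trip_ms: float) -> ControlMode:
    """Give the robot more local autonomy as the delay grows."""
    if round_trip_ms < 0:
        raise ValueError("latency cannot be negative")
    if round_trip_ms <= DIRECT_MAX_MS:
        return ControlMode.DIRECT
    if round_trip_ms <= SHARED_MAX_MS:
        return ControlMode.SHARED
    return ControlMode.SUPERVISED


if __name__ == "__main__":
    for delay in (40.0, 250.0, 1200.0):
        print(delay, select_control_mode(delay).value)
```

A real system would likely smooth the latency measurement and avoid flipping modes on every spike, but the shape of the decision is the same: the worse the link, the higher the level at which the human speaks.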

This is one reason teleoperation design reveals the maturity of the system. A fragile robot asks the human to compensate for everything. A stronger robot gives the human meaningful control without requiring impossible reflexes. The interface should show what the robot knows, what it is uncertain about, what action it is about to take, and how to stop it.

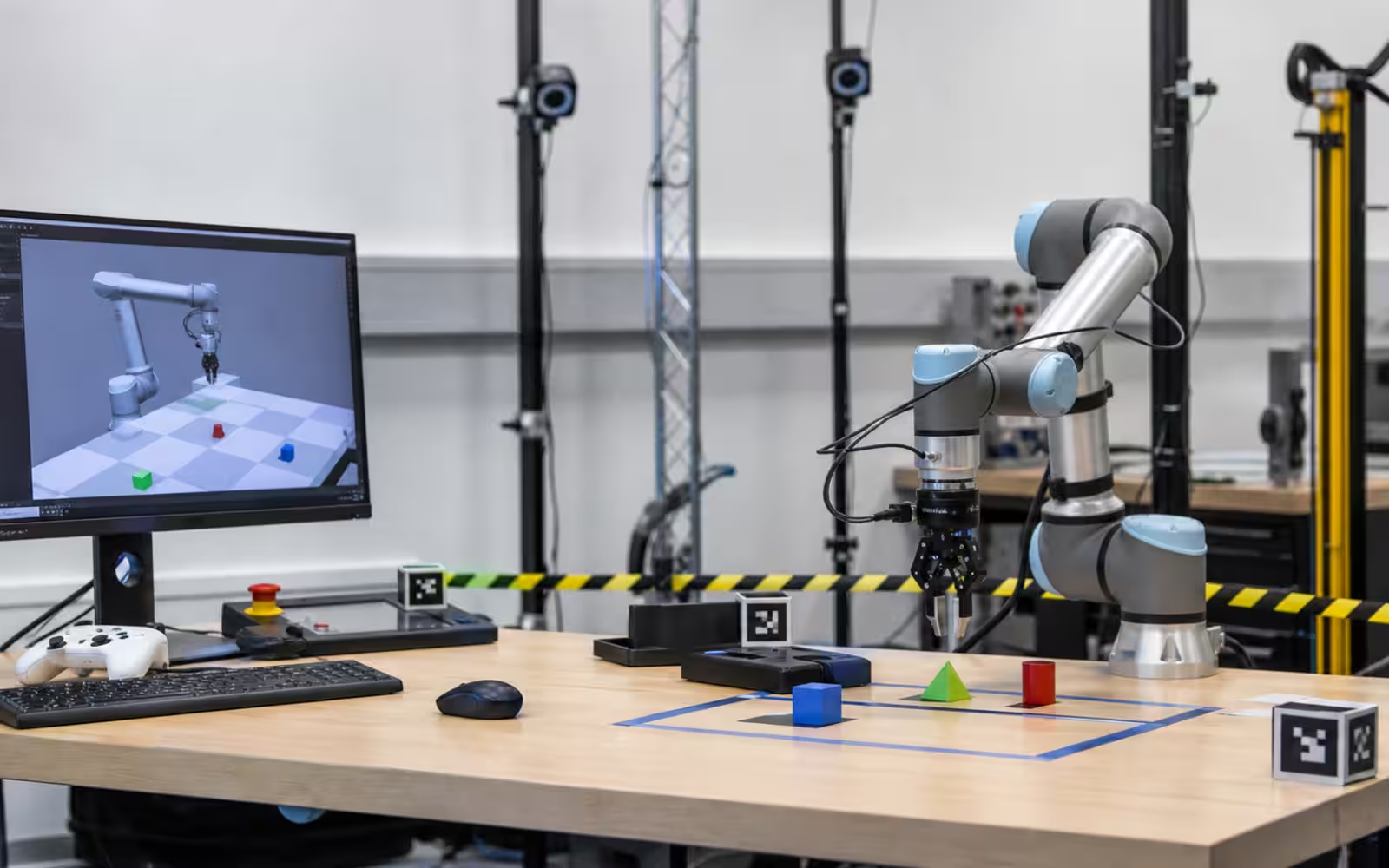

The interface is part of the robot

The teleoperation station is not an accessory. It is part of the product. Camera placement, depth cues, map views, warning states, control modes, logs, and emergency stops all shape what the human can safely do. A bad interface can make a capable robot look clumsy. A good interface can make limited autonomy useful because the operator sees the right information at the right moment.

The best interfaces do not bury the operator in every sensor stream. They organize attention. A navigation view helps the person understand where the robot is. A camera view helps with local judgment. A status panel shows battery, connectivity, payload, mode, and warnings. A clear control state shows whether the robot is acting, waiting, paused, or requesting help. Whether it is physical or virtual, the emergency stop has to be obvious and trusted.

There is also a training burden. Operators need to understand what the robot can and cannot see, how it fails, when to intervene, and when intervention might make things worse. A person who overcorrects constantly can prevent the robot from completing tasks. A person who trusts the system too much can miss early signs of trouble. Supervision is a skill.

Teleoperation can hide or reveal truth

There is a bad version of teleoperation: using hidden human control to make a robot seem more autonomous than it is. That can fool investors, customers, or the public for a while, but it creates a trust problem. If people think the robot is making decisions independently, they will judge the technology differently than they would if they knew it was assisted.

There is also an honest version: showing teleoperation as part of the deployment model. This can be perfectly respectable. Elevators had operators before they became ordinary automatic infrastructure. Aircraft use autopilot, pilots, air traffic control, checklists, and maintenance systems together. Many automation systems become useful before they become invisible.

The honest question is not “Is there a human?” The honest question is “What work is the human doing, how often, at what cost, and with what safety responsibility?” If the human is handling every hard case, the robot may not yet scale. If the human is supervising exceptions that become rarer over time, the system may be learning its way toward stronger autonomy.

Safety needs a fallback state

Teleoperation is often part of safety because it gives the robot a way to ask for help. But human help is not enough by itself. The robot needs a safe fallback when the connection drops, the operator is busy, the camera view is blocked, or the situation becomes unclear. Stopping safely is a capability. So is backing out, lowering a payload, releasing force, or moving to a known waiting area.
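The connection-drop case can be sketched as a heartbeat watchdog that runs on the robot itself. Everything here is a hypothetical illustration: the `LinkWatchdog` class, the `Fallback` states, and the timeout values are assumptions chosen to show the idea, not a real robot API. Time is passed in explicitly so the logic is easy to test.

```python
from dataclasses import dataclass
from enum import Enum


class Fallback(Enum):
    NONE = "continue"
    HOLD = "stop and hold position"
    RETREAT = "move to a known waiting area"


@dataclass
class LinkWatchdog:
    """Tracks operator heartbeats and picks a fallback when they stop."""

    hold_after_s: float = 0.5      # assumed: short silence, stop in place
    retreat_after_s: float = 30.0  # assumed: long silence, go wait somewhere safe
    last_heartbeat_s: float = 0.0

    def heartbeat(self, now_s: float) -> None:
        # Called whenever a message from the operator station arrives.
        self.last_heartbeat_s = now_s

    def check(self, now_s: float) -> Fallback:
        # Called from the robot's local control loop, not from the network.
        silence = now_s - self.last_heartbeat_s
        if silence >= self.retreat_after_s:
            return Fallback.RETREAT
        if silence >= self.hold_after_s:
            return Fallback.HOLD
        return Fallback.NONE


if __name__ == "__main__":
    wd = LinkWatchdog()
    wd.heartbeat(now_s=10.0)
    for t in (10.2, 11.0, 45.0):
        print(t, wd.check(now_s=t).value)
```

The design point is that the decision is made locally. The robot does not need the operator, or the network, to decide that the operator has gone quiet.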

This is especially important around people. A remote operator may not see everything happening near the robot. A camera angle can hide a foot, a pet, a child, a dangling cable, or a reflective surface. Local safety systems still matter: speed limits, force limits, bump sensors, geofences, warning sounds, lights, and conservative motion near humans. Teleoperation should add a layer of judgment, not replace basic safety design.

The future is supervised autonomy

For many real tasks, the next useful step is not a robot that never needs people. It is a robot that uses people well. One operator may supervise a small fleet. The robots may complete routine work autonomously and escalate edge cases. The operator may teach corrections that improve the system. The deployment may begin with heavy supervision and gradually reduce interventions as the environment, training data, and autonomy improve.

That is a more grounded future than the fantasy of instant general-purpose robots. It also makes adoption easier to evaluate. Look at the intervention rate. Look at the operator-to-robot ratio. Look at how long recovery takes. Look at whether the same failures repeat. Look at whether the interface gives operators real situational awareness. Look at whether customers understand the human role.
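Several of these checks can be computed from a simple intervention log. The sketch below assumes a hypothetical log format (an `Intervention` record with a cause and a recovery time) and a hypothetical `summarize` helper; the field names and sample numbers are made up for illustration and do not come from any real deployment.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Intervention:
    cause: str         # short label the operator or system assigned
    recovery_s: float  # seconds from request for help to resumed work


def summarize(interventions: list[Intervention], tasks_completed: int,
              robots: int, operators: int) -> dict:
    """Compute intervention rate, fleet ratio, recovery time, repeat causes."""
    if tasks_completed <= 0 or operators <= 0:
        raise ValueError("need at least one completed task and one operator")
    counts = Counter(i.cause for i in interventions)
    return {
        "interventions_per_task": len(interventions) / tasks_completed,
        "robots_per_operator": robots / operators,
        "mean_recovery_s": (mean(i.recovery_s for i in interventions)
                            if interventions else 0.0),
        # Causes seen more than once suggest the same failure is repeating.
        "repeated_causes": sorted(c for c, n in counts.items() if n > 1),
    }


if __name__ == "__main__":
    log = [
        Intervention("crooked pallet", 40.0),
        Intervention("crooked pallet", 60.0),
        Intervention("blocked aisle", 20.0),
    ]
    print(summarize(log, tasks_completed=100, robots=10, operators=2))
```

Tracked over weeks rather than a single shift, numbers like these show whether supervision is actually shrinking or whether the human is quietly absorbing the same hard cases again and again.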

Teleoperation is not the opposite of robotics progress. It is one of the ways robotics progress reaches the world before autonomy is perfect. The best systems are honest about that. They do not erase the human. They put the human where judgment matters most, then design the robot so that help becomes less frequent, safer, and more useful over time.