The first mistake people make with robot demos is treating the video as the evidence.

A video can be useful. It can show the shape of the machine, the apparent smoothness of motion, the kinds of objects involved, the speed of a task, and the confidence of the team presenting it. But a video is not a deployment. It is a selected window into something that happened, or was made to look like it happened, under conditions the viewer may not understand.

Robots are physical systems. The question they answer is not only whether an algorithm can choose an action. It is whether motors, batteries, sensors, software, grippers, floors, objects, lighting, people, maintenance routines, network conditions, and safety boundaries can cooperate long enough to do useful work. A demo can hide most of that. A deployment cannot.

The mature way to watch a robot demo is not cynicism. It is curiosity with a clipboard. You are not asking whether the team is lying. You are asking what the clip does and does not prove.

The missing denominator

Most demo videos show successes. They rarely show the denominator: how many attempts were made, how many failed, how often an operator intervened, and how long the system ran before the successful segment was recorded.

If a robot arm picks up a cup once, that is a data point. If it picks up one hundred cups of different shapes under different lighting conditions, with a clear failure rate and a safe recovery behavior, that is beginning to look like evidence. If a humanoid folds one shirt on a clean table, the result is interesting. If it handles a basket of mixed laundry in ordinary household lighting, notices when a sleeve is inside out, avoids dropping clothes on the floor, and knows when to ask for help, the claim is much stronger.

The denominator changes the meaning of the same clip. One successful pick after ninety failures is research progress. Ninety-nine successful picks out of one hundred in the actual work cell may be a product. Both can be valuable, but they are not the same thing.

This matters because robots often fail in ways that are visually boring. The gripper misses by a few millimeters. The planner times out. A reflective object confuses perception. The robot pauses too often. A person has to reset the scene. The battery drains before the task is economically useful. None of those failures makes a dramatic viral clip, but all of them matter more than the one moment that looked magical.
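To make the denominator concrete, here is a minimal sketch that turns a success count and an attempt count into a range of plausible success rates, using the Wilson score interval. The function name and the three scenarios are illustrative assumptions, not figures from any real robot. It also assumes every attempt is independent and equally hard, which real trials rarely are, so treat the output as a rough guide rather than a verdict.

```python
import math


def wilson_interval(successes: int, attempts: int, z: float = 1.96) -> tuple[float, float]:
    """Return an approximate 95% Wilson score interval for a success rate.

    Assumes independent attempts of equal difficulty (a simplification).
    """
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    if not 0 <= successes <= attempts:
        raise ValueError("successes must be between 0 and attempts")

    p = successes / attempts
    denom = 1 + z**2 / attempts
    center = (p + z**2 / (2 * attempts)) / denom
    half_width = z * math.sqrt(p * (1 - p) / attempts + z**2 / (4 * attempts**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical scenarios: the same "successful pick" with different denominators.
scenarios = [
    ("one clip, nothing else reported", 1, 1),
    ("one success after ninety failures", 1, 91),
    ("ninety-nine of one hundred", 99, 100),
]

for label, successes, attempts in scenarios:
    low, high = wilson_interval(successes, attempts)
    print(f"{label}: {successes}/{attempts} -> plausible rate {low:.2f} to {high:.2f}")
```

A single success with no reported failures leaves the plausible rate spread across most of the range, which is the statistical version of "that is a data point." Only the larger run narrows it enough to support a reliability claim.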

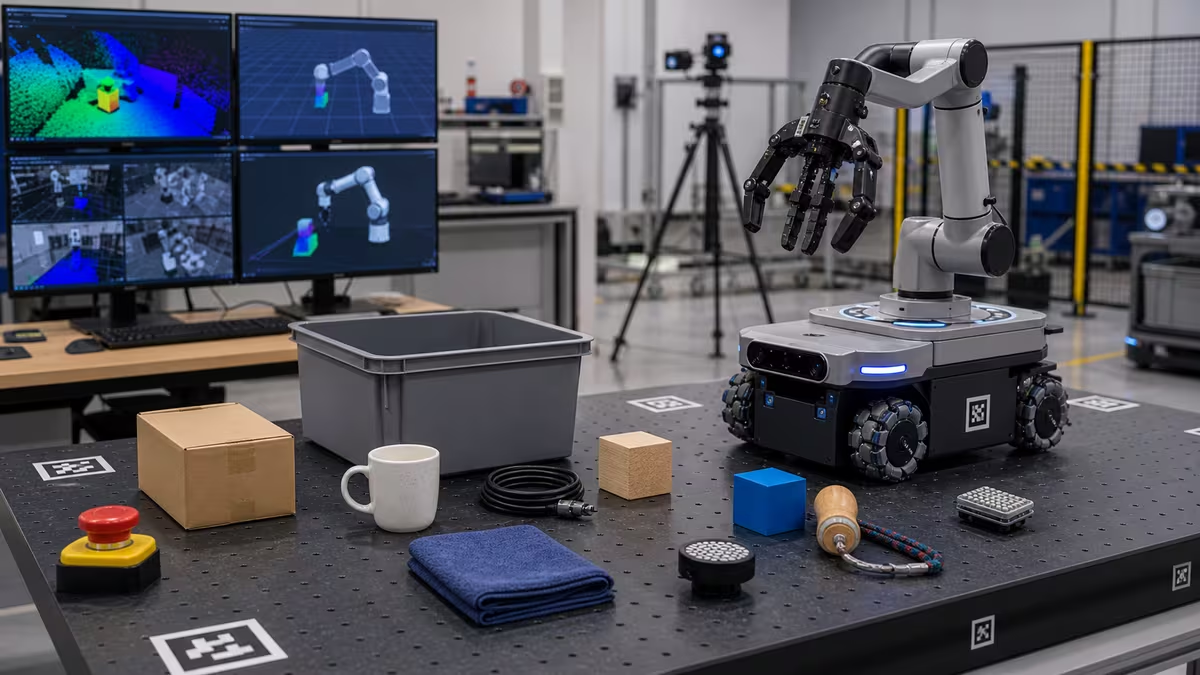

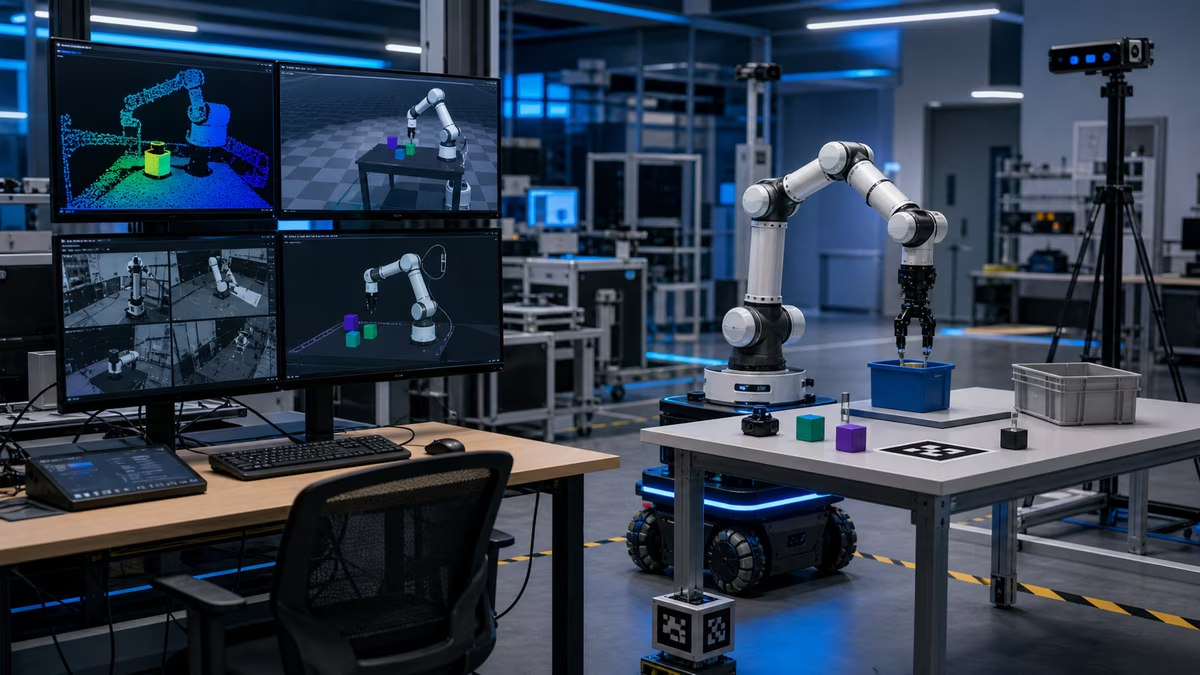

The environment is part of the robot

A robot demo is never just a robot demo. It is a robot plus an environment.

Look at the floor. Is it flat, clean, and marked? Look at the lighting. Is it controlled? Look at the objects. Are they known in advance, placed neatly, or unusually friendly to the gripper? Look at the people. Are they trained operators who understand the robot’s blind spots? Look at the room. Is it a lab, a mock apartment, a warehouse aisle, a trade-show booth, or an actual customer site?

The more controlled the environment, the less general the claim should be. That does not make the work weak. Constrained environments are where many robots become useful. Warehouses, factories, hospitals, greenhouses, and labs can often be redesigned around machines. The problem begins when a narrow setup is presented as proof of broad autonomy.

A taped lane on the floor is not embarrassing. It is information. A fixture that holds parts in the right orientation is not cheating. It is engineering. A bin designed for the robot’s gripper is not a failure. It may be the difference between a system that works and a system that performs intelligence for a camera. The honest question is whether the environment modification is acceptable for the use case.

In a factory, changing the fixture may be cheap compared with manual labor. In a home, asking the household to keep every object in robot-friendly positions may defeat the purpose. Evaluation begins with that context.

Autonomy has layers

When someone says a robot is autonomous, ask which layer they mean.

The robot may autonomously navigate a mapped space while a human chooses every task. It may autonomously grasp known objects while a remote operator handles exceptions. It may autonomously follow a script but require a person to reset the scene after each run. It may use an AI model to plan at a high level while lower-level control is traditional robotics. It may be autonomous only when everything goes right.

None of these layers is fake. They are just different. Navigation autonomy, manipulation autonomy, task-planning autonomy, exception-handling autonomy, and social autonomy are separate problems. A robot that is excellent at one can be weak at another.

This is why deployment language matters. “The robot can clean a floor” is different from “the robot can decide which rooms need cleaning, avoid pet messes, empty itself, handle thresholds, recover from cords, respect privacy zones, and notify the owner only when useful.” The first claim describes a task. The second describes a service.

Most real robots need supervision. The important question is not whether supervision exists. It is what kind of supervision the robot needs, how often, at what cost, and how gracefully the robot fails when that supervision is unavailable.

Speed is not just speed

Robot speed is easy to misread. A slow robot can look careful and intelligent in a video. In a deployment, slow may mean the economics do not work. A fast robot can look impressive until you ask about stopping distance, payload, injury risk, object damage, and error recovery.

Useful speed depends on the job. A hospital delivery robot does not need to sprint if it reliably moves supplies without blocking hallways. A warehouse picker may need a throughput target to justify its cost. A home assistant may be acceptable at human-slow speeds if it performs tasks while nobody is waiting. A collaborative robot near people may need to move slower for safety.

The real measure is not the peak motion in the clip. It is cycle time across a full task. How long from request to completed job? How much time is spent perceiving, planning, moving, retrying, charging, waiting for network responses, or asking for help? How often does a human have to step in? A demo that shows only the exciting motion may hide the quiet delays that decide whether the robot is useful.
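One way to keep the quiet delays visible is to log each request by phase and report the whole cycle, not only the motion. The sketch below is an illustration under assumptions: the `TaskLog` fields, the `summarize` helper, and the sample numbers are all hypothetical, and a real system would define its phases to match its own job.

```python
from dataclasses import dataclass


@dataclass
class TaskLog:
    """Seconds spent in each phase of one request (hypothetical fields)."""
    perceiving: float
    planning: float
    moving: float
    retrying: float
    waiting: float          # charging, network responses, waiting for help
    interventions: int = 0  # times a human had to step in

    @property
    def cycle_time(self) -> float:
        # Request-to-completion time is the sum of every phase, not just motion.
        return self.perceiving + self.planning + self.moving + self.retrying + self.waiting


def summarize(logs: list[TaskLog]) -> dict[str, float]:
    """Aggregate full-task metrics across a set of logged requests."""
    if not logs:
        raise ValueError("need at least one task log")
    total = sum(log.cycle_time for log in logs)
    return {
        "mean_cycle_s": total / len(logs),
        "moving_share": sum(log.moving for log in logs) / total,
        "interventions_per_task": sum(log.interventions for log in logs) / len(logs),
    }


# Made-up sample data: two requests with identical motion time.
logs = [
    TaskLog(perceiving=2, planning=3, moving=10, retrying=5, waiting=20, interventions=1),
    TaskLog(perceiving=2, planning=3, moving=10, retrying=0, waiting=5),
]
print(summarize(logs))
```

In this made-up data both requests show the same ten seconds of motion, yet one takes twice as long end to end. A clip of the motion alone would make them look identical.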

Failure behavior is the product

A robot’s failure behavior tells you more than its best success.

When the robot cannot identify an object, does it guess, stop, ask, or try a safer alternate action? When a person steps into its path, does it slow, reroute, freeze, or become unpredictable? When a grasp fails, does it damage the object, drop it, retry sensibly, or trap itself in a loop? When the network connection fails, does the robot maintain a safe state? When the battery runs low, does it finish recklessly or return to charge?

These questions sound less glamorous than asking whether a robot can “think.” They are more important. A robot that knows how to stop well is often more deployable than a robot that can perform one impressive task but has no mature recovery behavior.

Safety is part of this. Emergency stops, speed limits, force limits, protected zones, sensors, operator training, and risk assessments are not accessories. They are evidence that the team understands the machine as a physical actor. In robotics, safety is not something added after intelligence. It is one of the conditions that makes intelligence usable.

Teleoperation is not a scandal, but hiding it is

Many robots use teleoperation somewhere in development, testing, data collection, or commercial service. That is not inherently bad. A remote human can help gather training data, rescue edge cases, approve sensitive actions, or make a system useful before full autonomy is ready.

The problem is pretending teleoperation is not present when it is central to the result. A robot that works because a remote operator handles every hard decision is a different product from a robot that asks for help once in a while. A company can still build value with human-in-the-loop robotics, but the economics, staffing, latency, privacy, and reliability story must be honest.

When you watch a demo, look for clues. Does the robot pause in ways that suggest external approval? Are there off-camera operators? Do its actions rely on knowledge that the visible sensors could not plausibly have provided? Is the demo described as autonomous in a precise way, or only in marketing language?

Again, the right posture is not accusation. It is asking where the human is in the loop.

The pilot is the real exam

A robot becomes interesting when it leaves the lab and enters a pilot with real constraints. The pilot does not need to be huge, but it should reveal the operational story. Who unboxes and installs the robot? Who maps the space? Who trains staff? Who handles exceptions? Who cleans sensors? Who updates software? Who owns liability? What happens at night? What data leaves the site? What counts as success?

A good pilot defines the job narrowly enough to measure it. It does not say, “help in the warehouse.” It says, “move these totes from this zone to that zone during these hours with this maximum wait time and this safety protocol.” It does not say, “assist at home.” It says, “vacuum these rooms, avoid these areas, notify on these events, and recover from these common obstacles.”

The narrower statement is not less ambitious. It is how real deployment begins.
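A narrow pilot statement is specific enough to write down as data and check against logs. The sketch below shows one possible shape for the tote-moving example. Every field name, zone label, time window, and threshold here is a hypothetical placeholder, and the 95 percent on-time share is an assumed target rather than a recommended one.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class PilotSpec:
    """A measurable job definition for a pilot (all fields are illustrative)."""
    task: str
    source_zone: str
    dest_zone: str
    start: time
    end: time
    max_wait_s: float
    safety_protocol: str

    def in_hours(self, t: time) -> bool:
        # The pilot only makes claims about this operating window.
        return self.start <= t <= self.end

    def meets_target(self, wait_times_s: list[float], required_share: float = 0.95) -> bool:
        # Success means enough requests were served within the maximum wait.
        if not wait_times_s:
            return False
        on_time = sum(1 for w in wait_times_s if w <= self.max_wait_s)
        return on_time / len(wait_times_s) >= required_share


# Hypothetical pilot: placeholder zones, hours, and limits.
spec = PilotSpec(
    task="move totes",
    source_zone="zone A",
    dest_zone="zone B",
    start=time(8, 0),
    end=time(17, 0),
    max_wait_s=120.0,
    safety_protocol="site protocol (placeholder)",
)

observed_waits = [60.0, 90.0, 130.0]  # made-up sample, in seconds
print(spec.in_hours(time(9, 30)), spec.meets_target(observed_waits))
```

A statement like "help in the warehouse" cannot be expressed this way, because nothing in it can be compared against a log.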

How to watch without being fooled

The best viewer gives credit for real progress while refusing to let a clip carry more meaning than it can bear. Ask what the robot did, how often it did it, under what conditions, with what supervision, at what speed, with what failure behavior, and at what cost. Ask what changed in the environment to make success possible. Ask whether those changes are acceptable.

That habit makes robotics more exciting, not less. It lets you see the actual engineering: the careful boundary-setting, the dull reliability work, the safety cases, the fixtures, the fleet tools, the maintenance routines, the operator interfaces, and the long climb from a successful moment to a useful machine.

A good demo says, “Here is what may be possible.” A deployment says, “Here is what works when the room stops helping.”

Learn to tell them apart, and the field becomes much clearer.