The robot’s most valuable part may not be the arm, the battery, the camera, or the model. It may be the record of what happened when the machine tried to do real work.

Physical AI learns from contact with a world that does not simplify itself for software. The cup slips because the gripper pads are dusty. The cardboard box bows under pressure. The mobile base hesitates near a glass wall. A human blocks the aisle for a moment, then moves away. A cable appears on the floor after lunch. Each moment can become useful data, but only if the system records enough context to explain what happened.

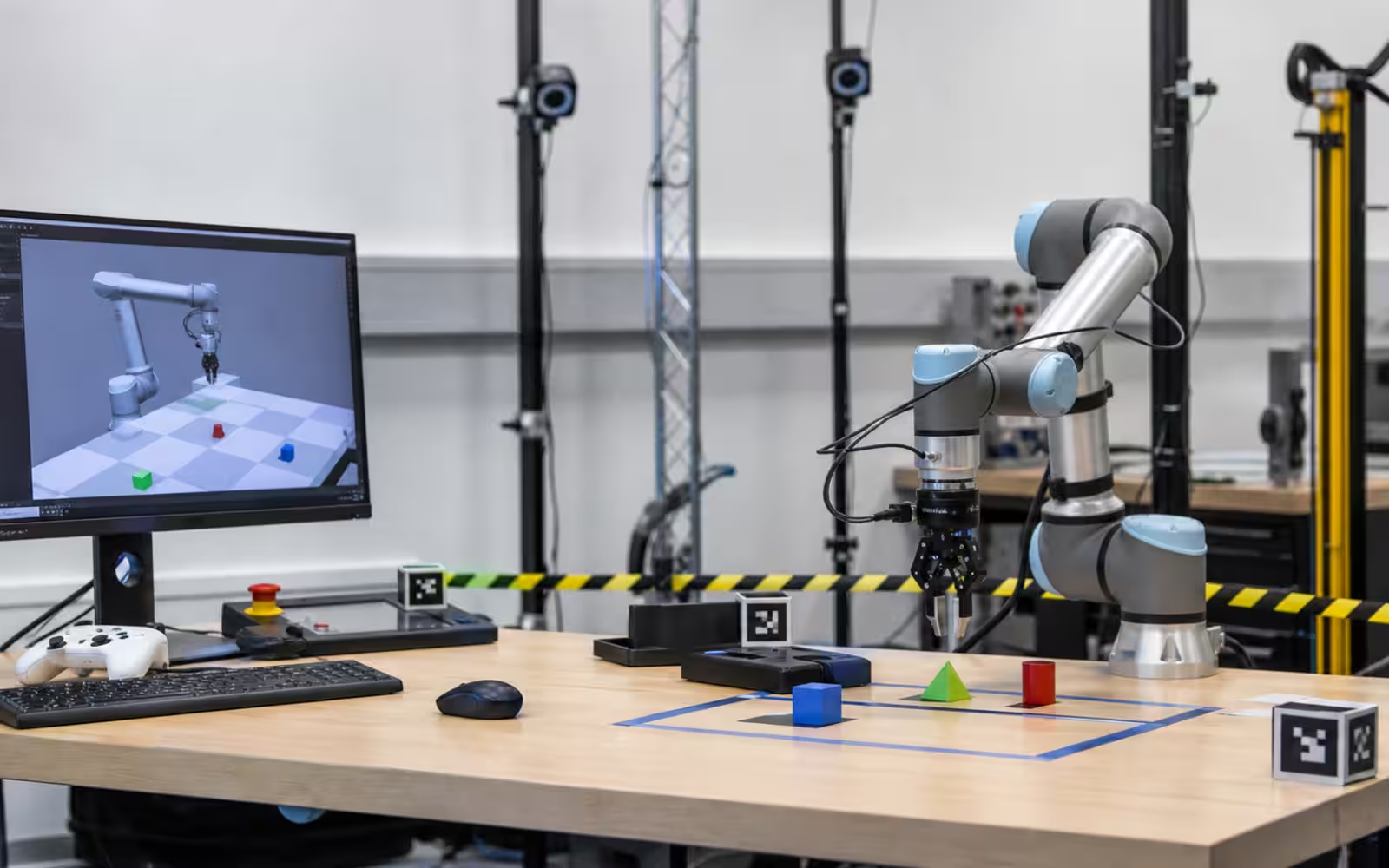

This is why robot data collection deserves its own discipline. It is not only a camera pointed at a robot. It is the practice of capturing synchronized sensor streams, commands, outcomes, human corrections, environment notes, calibration state, and failure details so that the next version of the robot has a better chance of acting well. The ideas in Embodied AI depend on that record. So do better demos, safer deployments, and more honest claims about autonomy.

Data Is Not Just Video

A video of a robot picking up an object can be useful for a viewer, but it is a thin source of learning for the robot team. The robot needs the measurements behind the scene. It may need RGB frames, depth maps, lidar scans, joint positions, gripper width, force readings, tactile signals, wheel odometry, motor currents, battery state, timestamps, control commands, map coordinates, and logs from the autonomy system. It also needs to know what task it was trying to complete and what counted as success.
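As a rough illustration, one trial could be stored as a record that ties the sensor streams to the task and its success criterion. This is a minimal sketch, not a standard format: the names `Sample`, `Episode`, `payload_ref`, and `calibration_id` are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Sample:
    """One timestamped measurement from a single stream (hypothetical schema)."""
    stream: str        # e.g. "rgb", "depth", "gripper_width"
    t_ns: int          # capture time in nanoseconds on a shared clock
    payload_ref: str   # pointer to the stored data, such as a file path


@dataclass
class Episode:
    """One attempt at a task, with the context needed to interpret it."""
    task: str                          # what the robot was trying to do
    success_criterion: str             # what counted as success
    calibration_id: str                # which calibration was active
    samples: list[Sample] = field(default_factory=list)
    succeeded: Optional[bool] = None   # None until the outcome is judged

    def missing_streams(self, required: set[str]) -> set[str]:
        """Streams the task needs that this episode never recorded."""
        recorded = {s.stream for s in self.samples}
        return required - recorded


ep = Episode("pick bottle", "bottle resting in bin", "cal-001")
ep.samples.append(Sample("rgb", 1_000, "frames/0001.png"))
print(sorted(ep.missing_streams({"rgb", "depth", "gripper_width"})))
# ['depth', 'gripper_width']
```

A check like `missing_streams` is one way to notice, before analysis begins, that a trial is only a video and not yet a measurement.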

The difference matters. A robot arm that misses a bottle by two centimeters did not simply “fail.” The perception system may have estimated the bottle pose incorrectly. The camera may have been bumped. The grasp planner may have chosen a collision-free path that left no margin for the bottle’s curved surface. The controller may have lagged. The bottle may have been wet. The operator may have changed the object after the task began. A useful dataset gives engineers a way to separate these causes.

This is where Robot Perception and data collection meet. Perception turns measurements into beliefs about the scene. Data collection preserves the measurements, the beliefs, and the consequences of acting on them. Without that chain, a team may know that a failure happened without knowing what the robot thought was true at the moment it moved.

Why Physical Data Is Expensive

Internet-scale data can be copied cheaply. Robot data is slow to gather because a machine has to live through every trial. A mobile robot has to drive through an actual hallway. A hand has to close around an actual object. A humanoid has to balance on actual feet. When the attempt fails, someone may need to reset the scene, inspect the hardware, replace a damaged prop, clean a sensor, or make sure the area is safe before another trial begins.

The cost is not only time. Good robot data requires calibration, maintenance, and human judgment. The sensors must be aligned. The clocks must agree. The task must be defined consistently. The scene must be varied enough to matter, but controlled enough that results can be interpreted. If a team records thousands of trials with a loose camera mount, it may have gathered a large dataset that teaches the wrong lesson.

This makes “more data” a weaker goal than it sounds. Better data often means data with known conditions, useful variation, clear failure labels, reliable timing, and enough metadata to replay what happened. A smaller set of carefully collected trials can be more useful than a larger archive that nobody trusts. Robotics rewards dull recordkeeping because dull recordkeeping is what lets a team compare one system version with another.

Teleoperation Is Also Instrumentation

Teleoperation is often discussed as a workaround for incomplete autonomy. That is one role, and Robot Teleoperation explains why human supervision remains common in useful systems. Teleoperation is also a data instrument. A human operator can show the robot how a task can be solved, rescue the robot from edge cases, and create examples of judgment that are hard to script.

The best teleoperation data captures more than the final motion. It records what the operator saw, what control mode was used, where latency appeared, when the operator hesitated, what the robot was allowed to do on its own, and which parts required human correction. If the operator takes over every time the gripper approaches a shiny object, that pattern is valuable. It says something about perception uncertainty, grasp planning, or operator trust.
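The shiny-object pattern only becomes visible if takeovers are logged with some context. The sketch below assumes each intervention carries a hand-assigned `context_tag`. That field, and the `Intervention` record itself, are hypothetical and stand in for whatever annotation a team actually uses.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Intervention:
    """One operator takeover (hypothetical record layout)."""
    episode_id: str
    t_ns: int            # when the operator took control
    control_mode: str    # e.g. "full_teleop", "waypoint_assist"
    context_tag: str     # assumed annotation, e.g. "shiny_object"


def takeover_counts(interventions):
    """Count operator takeovers per context tag, most frequent first."""
    return Counter(i.context_tag for i in interventions).most_common()


log = [
    Intervention("ep1", 10, "full_teleop", "shiny_object"),
    Intervention("ep2", 25, "waypoint_assist", "cluttered_bin"),
    Intervention("ep3", 40, "full_teleop", "shiny_object"),
]
print(takeover_counts(log))
# [('shiny_object', 2), ('cluttered_bin', 1)]
```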

Human demonstrations are not perfect truth. Operators develop habits. They may overfit to one workstation, one camera view, or one robot body. They may avoid difficult cases that an autonomous system will eventually face. Their corrections still matter, but the team has to treat them as examples produced under specific conditions, not as universal instructions. The robot is learning from people, hardware, and environment at once.

Failures Are The Richest Part

A clean success can be less informative than a messy failure. Success confirms that one path worked under one set of conditions. Failure exposes the boundary of the robot’s capability envelope. It shows the object that confused the detector, the floor condition that broke localization, the timing delay that ruined a handoff, or the social situation where the robot should have paused sooner.

This is why a mature robotics team does not only save highlight runs. It saves misses, timeouts, near misses, aborted tasks, operator interventions, protective stops, repeated retries, and cases where the robot did the right thing for the wrong reason. These records can be uncomfortable because they make weakness visible. They are also the raw material of progress.

Failure data needs careful language. “Bad grasp” is less useful than a record that distinguishes poor pose estimate, unreachable approach, wrong gripper force, object deformation, slippery surface, occlusion, or post-grasp collision. The labels do not have to be perfect on the first pass, but they should become more specific as the team learns which failures repeat. A vague archive becomes a junk drawer. A well-described archive becomes a map of the next engineering work.
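One way to keep labels from drifting back to "bad grasp" is a small fixed vocabulary plus a measure of how many records still carry the vague label. The enum below reuses the causes listed above. The `UNSPECIFIED` member and the `refinement_backlog` helper are assumptions added for illustration.

```python
from enum import Enum


class GraspFailure(Enum):
    UNSPECIFIED = "bad_grasp"            # first-pass label, to be refined later
    POOR_POSE_ESTIMATE = "poor_pose_estimate"
    UNREACHABLE_APPROACH = "unreachable_approach"
    WRONG_GRIPPER_FORCE = "wrong_gripper_force"
    OBJECT_DEFORMATION = "object_deformation"
    SLIPPERY_SURFACE = "slippery_surface"
    OCCLUSION = "occlusion"
    POST_GRASP_COLLISION = "post_grasp_collision"


def refinement_backlog(labels):
    """Fraction of failures that still carry only the vague first-pass label."""
    if not labels:
        return 0.0
    vague = sum(1 for label in labels if label is GraspFailure.UNSPECIFIED)
    return vague / len(labels)


labels = [
    GraspFailure.UNSPECIFIED,
    GraspFailure.OCCLUSION,
    GraspFailure.UNSPECIFIED,
    GraspFailure.SLIPPERY_SURFACE,
]
print(refinement_backlog(labels))  # 0.5
```

Tracking that fraction over time gives a simple signal of whether the archive is becoming more specific or filling up like a junk drawer.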

The habit is close to the one in Robot Demo Evaluation. The important question is not whether a robot succeeded once. It is what happened across attempts, what failed, what supervision was present, and how the system behaved when the room stopped helping.

Time Is The Hidden Schema

Robot data is temporal. A camera frame, a depth image, a force spike, a motor command, and a map update only make sense when their timing is known. If the system cannot say which observation preceded and informed which action, learning becomes guesswork. A half-second delay can make a good policy look bad or a bad policy look plausible.
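A common way to pair streams is nearest-timestamp matching with a tolerance, so that a command is never silently attached to a stale frame. The sketch below assumes all timestamps are integers in nanoseconds on one shared clock and that each stream is already sorted. The function name and the tolerance values are illustrative, not taken from any particular logging stack.

```python
from bisect import bisect_left


def nearest_within(timestamps_ns, t_ns, tolerance_ns):
    """Return the index of the timestamp closest to t_ns, or None if even
    the closest one is farther away than tolerance_ns.

    timestamps_ns must be sorted in ascending order.
    """
    if not timestamps_ns:
        return None
    i = bisect_left(timestamps_ns, t_ns)
    # The closest timestamp is either just before or at the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps_ns)]
    best = min(candidates, key=lambda j: abs(timestamps_ns[j] - t_ns))
    if abs(timestamps_ns[best] - t_ns) > tolerance_ns:
        return None
    return best


# Camera frames at roughly 30 Hz; commands looked up with a 10 ms tolerance.
frame_times = [0, 33_000_000, 66_000_000, 100_000_000]
print(nearest_within(frame_times, 60_000_000, 10_000_000))  # 2
print(nearest_within(frame_times, 50_000_000, 10_000_000))  # None
```

Returning `None` instead of the nearest frame regardless of distance makes timing gaps visible in the dataset, where they can be counted and investigated.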

Timing also matters because robots act through feedback. A mobile robot does not simply choose a route and vanish into the route. It senses, updates, adjusts speed, avoids obstacles, and reacts to people. A robot arm does not simply choose a grasp and teleport to the object. It moves through a trajectory, observes contact, adjusts force, and verifies placement. The data record needs to preserve that sequence.

This is one reason logs from the autonomy stack are so important. A high-level task planner may say “pick the red cup,” while a motion planner chooses a path, a controller sends joint commands, and a safety layer limits speed. If a failure occurs, the team needs to know which layer made the decisive choice and which layer had enough information to act differently.
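If every layer writes its decisions to a shared, timestamped log, the question "what did each layer last decide before the failure?" becomes a short query. The following is a sketch under that assumption. The `Decision` record and the layer names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    """One logged choice made by a layer of the autonomy stack."""
    t_ns: int
    layer: str      # e.g. "task_planner", "motion_planner", "controller", "safety"
    summary: str    # human-readable description of the choice


def last_decisions_before(decisions, failure_t_ns):
    """For each layer, return its most recent decision at or before the failure."""
    latest = {}
    for d in sorted(decisions, key=lambda d: d.t_ns):
        if d.t_ns <= failure_t_ns:
            latest[d.layer] = d   # later entries overwrite earlier ones
    return latest


log = [
    Decision(100, "task_planner", "pick the red cup"),
    Decision(150, "motion_planner", "path A, 1 cm clearance"),
    Decision(180, "safety", "limit speed to 0.2 m/s"),
    Decision(220, "motion_planner", "replan: path B"),
    Decision(400, "controller", "joint command after failure"),
]
snapshot = last_decisions_before(log, failure_t_ns=300)
for layer, d in snapshot.items():
    print(layer, "->", d.summary)
```

With the example log, the snapshot contains the task planner, motion planner, and safety layer, and it reports the replanned path B instead of the original path A because that was the motion planner's latest choice before the failure.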

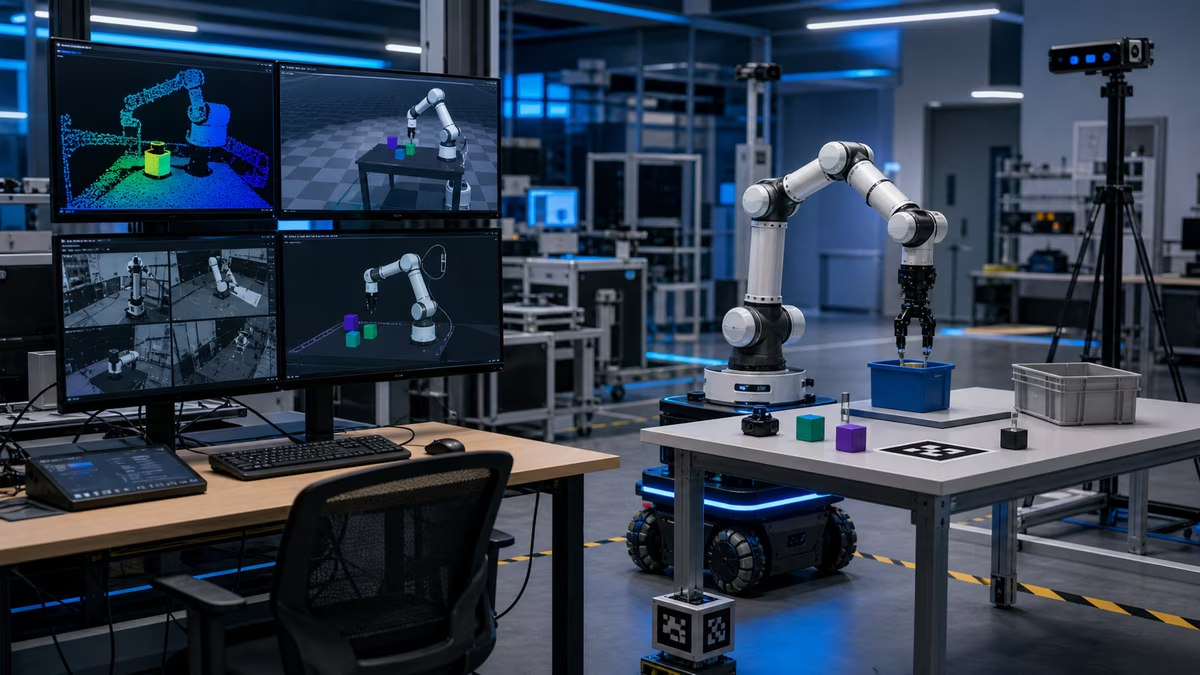

Replay Before Retraining

The tempting response to a pile of robot data is to train a larger model. Sometimes that is appropriate. Often the first step should be replay. Can the team reconstruct what the robot saw? Can they run the same perception model again and get the same belief? Can they test a new planner against old scenes without risking hardware? Can they inspect the moment where a human took over and see what changed?

Replay turns data into an engineering tool. It lets a team compare versions, reproduce bugs, and check whether a proposed fix actually addresses the failure that motivated it. It also prevents a common mistake: treating every weakness as a machine learning problem. A replay may reveal that the model was fine but the camera transform was stale, the gripper pads were worn, the task definition was ambiguous, or the workspace lighting changed.
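In its simplest form, replay means re-running a component on recorded inputs and comparing the result with what was logged at the time. The sketch below does this for a pose estimator. `LoggedBelief`, the 5 mm tolerance, and the toy estimator are assumptions for illustration, and a real system would load stored sensor data in place of the short list of floats used here.

```python
import math
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LoggedBelief:
    """What the robot observed and what it believed at one moment."""
    frame_id: str
    observation: Sequence[float]        # stand-in for stored sensor data
    pose: tuple[float, float, float]    # logged x, y, z estimate in metres


def replay_mismatches(log, estimate_pose, tol_m=0.005):
    """Re-run estimate_pose on each recorded observation and return the
    frame ids whose new estimate differs from the logged belief by more
    than tol_m (Euclidean distance)."""
    mismatches = []
    for entry in log:
        new_pose = estimate_pose(entry.observation)
        if math.dist(new_pose, entry.pose) > tol_m:
            mismatches.append(entry.frame_id)
    return mismatches


def toy_estimator(observation):
    """Placeholder model: treats the first three values as the pose."""
    return (observation[0], observation[1], observation[2])


log = [
    LoggedBelief("f1", [0.40, 0.10, 0.05], (0.40, 0.10, 0.05)),
    LoggedBelief("f2", [0.42, 0.10, 0.05], (0.40, 0.10, 0.05)),  # 2 cm off
]
print(replay_mismatches(log, toy_estimator))  # ['f2']
```

An empty result suggests the perception output is reproducible and the cause may lie elsewhere, such as a stale transform or worn hardware. A non-empty result points to the frames worth inspecting first.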

Good replay systems are not glamorous, but they change the pace of robotics work. They let engineers spend scarce robot time on the cases that cannot be answered offline. They also make safety reviews more concrete because the team can inspect what the robot believed before a risky motion, not only what an observer saw from outside.

Simulation Needs Real Corrections

Simulation can multiply experience, but it needs real data to stay honest. Sim-to-Real Robot Learning is partly a story about disagreement between virtual practice and physical consequence. Robot data collection gives that disagreement shape. It shows which simulated assumptions were too clean, which materials behaved differently, which sensors had noise the simulator did not capture, and which edge cases were absent from training.

The useful loop is not simulation first, reality last. It is a conversation. Real failures inform the simulator. The simulator generates variations and candidate fixes. The real robot tests whether those fixes survive contact. Logs from that test update both the learned policy and the team’s understanding of the environment. Over time, the virtual world becomes less polite because the real world keeps correcting it.

This loop works best when data collection is planned before the failure arrives. If the robot only records a low-resolution video and a success flag, the team may not know why transfer failed. If it records sensor state, commands, timing, calibration, contact, and operator intervention, the team has a chance to repair the bridge between practice and deployment.

Privacy And Consent Are Part Of The Dataset

Robot data often contains people, homes, workplaces, routines, voices, maps, inventory, labels, and operational habits. That makes collection a trust problem as well as a technical problem. A robot that moves through a space can record more than the task requires. The team should be clear about what is captured, why it is captured, how long it is retained, who can inspect it, and how sensitive material is handled.

The right approach depends on the setting, but the principle is stable: collect what the task and safety case need, protect it carefully, and avoid treating private spaces as free training material. In a lab, that may mean controlled scenes and staged objects. In a warehouse, it may mean access controls, retention rules, and attention to employee visibility. In a home, it may mean stricter limits because the robot is operating in intimate space.

Good data governance is not a separate chore that slows physical AI down. It supports the work by making collection repeatable, reviewable, and acceptable to the people living or working around the machine.

What Better Data Changes

Better robot data does not make robotics easy. It makes the difficulty legible. It shows which objects are still out of reach, which environments are too variable, which interventions happen too often, which failures are rare but severe, and which parts of the system improve when a model changes. It lets a team say less about what the robot seems to do and more about what it has done across conditions.

That is the practical promise. Physical AI will not become reliable because one model is impressive in isolation. It will become reliable where hardware, software, operations, supervision, safety, and data collection form a loop that keeps telling the truth. The robot acts. The world answers. The system records the answer clearly enough that the next action can be better.