Embodied AI is the idea that intelligence changes when it has a body.

A chatbot can answer a question without touching the world. A robot has to perceive a scene, choose an action, move through physics, and live with the result. The cup slips. The floor reflects. The door is heavier than expected. The object is behind another object. The human steps into the path. The robot has to notice, adapt, and stay safe.

That is the embodied part.

What embodied AI includes

Embodied AI sits at the intersection of:

- perception

- language understanding

- spatial reasoning

- motion planning

- control

- tactile sensing

- simulation

- reinforcement learning

- imitation learning

- safety constraints

- robot hardware

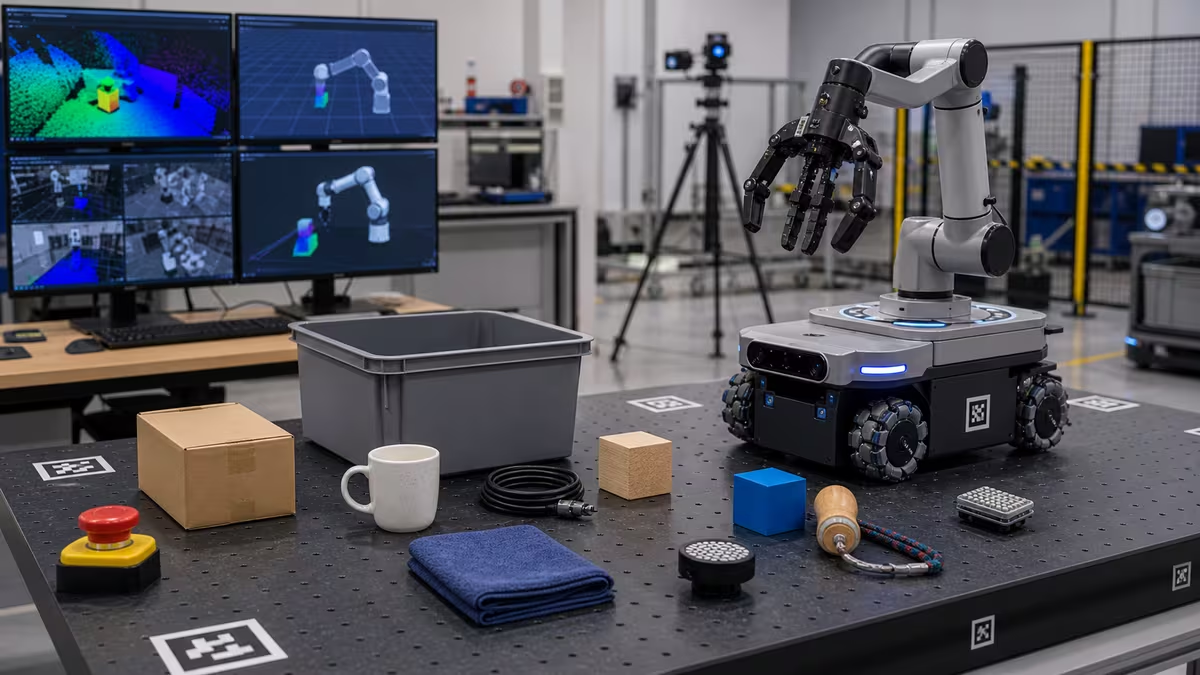

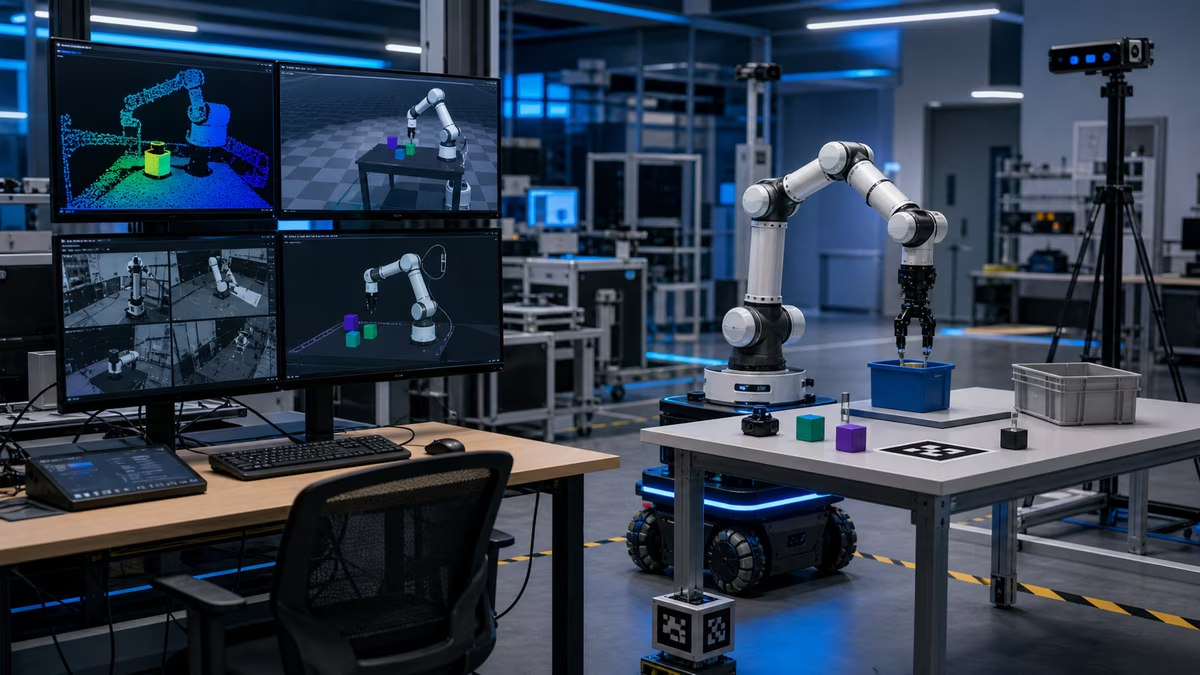

The model is only one part. A robot also needs sensors, actuators, calibration, timing, controllers, maps, task definitions, and fallback behavior.

Why physical data is different

Internet text is abundant. Good robot data is expensive.

Robot data may include camera feeds, depth images, joint positions, forces, gripper states, tactile readings, commands, failures, human corrections, and environment metadata. Collecting it requires hardware, time, supervision, and safety precautions. A failed attempt may break an object or interrupt a facility's operations.

That makes data quality central.

Useful robot datasets need:

- clear task definitions

- synchronized sensor streams

- action labels

- success and failure outcomes

- object variety

- environment variety

- safety annotations

- calibration records
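Several items on this list can be checked mechanically. The sketch below shows one possible shape for an episode record and a simple test for synchronized sensor streams. Every name here (`Frame`, `Episode`, `max_sync_error`, `is_usable`) and the 20 ms tolerance are assumptions made for illustration, not a standard format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frame:
    """One time step of a recorded episode (field names are illustrative)."""
    t_camera: float               # camera timestamp, seconds
    t_joints: float               # joint-state timestamp, seconds
    joint_positions: List[float]
    action: List[float]           # action label commanded at this step


@dataclass
class Episode:
    task: str                     # task definition, e.g. "pick red mug"
    success: bool                 # outcome label; failed episodes are kept
    calibration_id: str           # pointer to the calibration record in use
    frames: List[Frame] = field(default_factory=list)


def max_sync_error(episode: Episode) -> float:
    """Largest camera/joint timestamp mismatch in the episode, in seconds."""
    return max((abs(f.t_camera - f.t_joints) for f in episode.frames), default=0.0)


def is_usable(episode: Episode, tolerance_s: float = 0.02) -> bool:
    """Accept an episode only if it has frames and its streams are aligned."""
    return bool(episode.frames) and max_sync_error(episode) <= tolerance_s


episode = Episode(
    task="pick red mug", success=False, calibration_id="cal-001",
    frames=[
        Frame(0.000, 0.004, [0.0, 0.1], [0.0, 0.0]),
        Frame(0.100, 0.131, [0.0, 0.2], [0.0, 0.1]),  # streams 31 ms apart
    ],
)
print(is_usable(episode))  # False: the second frame exceeds the 20 ms tolerance
```

The episode is rejected for poor synchronization, not because the task failed. Failed attempts stay in the dataset as labeled outcomes.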

From language to action

A useful embodied system often has several layers.

Task interpretation

The robot turns a human request into a goal. “Bring me the red mug” becomes a search and manipulation problem.

Scene understanding

The robot identifies objects, locations, obstacles, people, and possible interaction points.

Skill selection

The system chooses a skill: navigate, reach, grasp, open, pour, scan, push, pull, or ask for help.

Motion and control

Low-level controllers execute movements while respecting limits, contact, balance, and safety.

Feedback and recovery

The robot checks whether the action worked. If it failed, it retries, changes strategy, asks for help, or stops.
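The layers above can be sketched as a small control loop. This is a toy outline under stated assumptions, not how any particular robot stack is built: `run_task`, the `skills` table, the `select` callback, and the `person_in_path` key are all hypothetical, and perception is reduced to a plain dictionary.

```python
from typing import Callable, Dict

# Hypothetical skill signature: takes the scene, returns True on success.
Skill = Callable[[dict], bool]


def run_task(goal: str, scene: dict, skills: Dict[str, Skill],
             select: Callable[[str, dict], str], max_attempts: int = 3) -> str:
    """Select a skill, execute it, check the result, then retry, ask, or stop."""
    for attempt in range(1, max_attempts + 1):
        # A real system would re-perceive the scene at the start of each attempt.
        if scene.get("person_in_path"):
            return "stopped: person in path"        # safety check comes first
        name = select(goal, scene)                  # skill selection
        if name not in skills:
            return "asked for help: no skill for goal"
        if skills[name](scene):                     # motion and control
            return f"done: {name} succeeded on attempt {attempt}"
    return "asked for help: retries exhausted"      # recovery has a limit


calls = {"n": 0}


def flaky_grasp(scene: dict) -> bool:
    """Stand-in skill that fails once, then succeeds."""
    calls["n"] += 1
    return calls["n"] >= 2


result = run_task("bring red mug", {"person_in_path": False},
                  {"grasp": flaky_grasp}, lambda goal, scene: "grasp")
print(result)  # done: grasp succeeded on attempt 2
```

Returning a plain status string keeps the example short. The point is that every path ends in success, a request for help, or a stop, never an unbounded retry.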

Foundation models for robots

Robot foundation models try to generalize across tasks, robots, and environments. They may connect language, images, video, and robot actions so a robot can learn skills from broader data.

The promise is real: fewer hand-coded behaviors, better generalization, and easier instruction.

The hard part is grounding. A phrase like “carefully place the glass on the counter” hides many physical details: grip force, orientation, path, surface friction, collision avoidance, and what “carefully” means near a person.

Simulation helps, but does not erase reality

Simulation is useful because it lets researchers generate many trials, test policies, vary scenes, and train without breaking hardware.

But simulation never matches reality exactly, a mismatch often called the sim-to-real gap:

- friction differs

- lighting differs

- sensors have noise

- objects deform

- contact physics is hard

- real motors heat and wear

- people behave unpredictably

Good sim-to-real work narrows the gap. It does not pretend the gap is gone.
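One common way to narrow the gap, not named in the text above, is domain randomization: train across many simulator settings so the policy does not overfit to one idealized world. The sketch below only samples parameters and adds sensor noise. `SimParams`, `RANGES`, and the numeric ranges are illustrative assumptions, and no particular simulator API is implied.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    friction: float           # surface friction coefficient
    light_intensity: float    # relative scene brightness
    sensor_noise_std: float   # standard deviation of additive sensor noise


# Illustrative ranges only; real ranges should come from measurements.
RANGES = {
    "friction": (0.4, 1.0),
    "light_intensity": (0.5, 1.5),
    "sensor_noise_std": (0.0, 0.02),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one simulator configuration, uniformly within each range."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


def noisy_reading(true_value: float, params: SimParams, rng: random.Random) -> float:
    """Corrupt a sensor value with Gaussian noise at the sampled level."""
    return rng.gauss(true_value, params.sensor_noise_std)


rng = random.Random(0)            # seeded so runs are repeatable
for _ in range(3):
    params = sample_params(rng)   # one new configuration per training episode
    print(params, noisy_reading(1.0, params, rng))
```

Randomization covers only the factors someone thought to vary. Motor wear, deformable objects, and human behavior still need real-world testing.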

Teleoperation and human demonstrations

Many robot learning systems begin with human demonstrations. A person teleoperates the robot or otherwise records the actions for a task, and the model learns to imitate the recorded behavior.

This can be powerful because humans provide common sense and recovery behavior. It also creates questions:

- Are demonstrations diverse enough?

- Do they include failures?

- Can the robot exceed the demonstrator?

- Does the policy know when it is outside its training distribution?

- Can the system communicate its uncertainty?
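The question of whether a policy knows it has left its training data can be approximated crudely by measuring how far the current state is from anything seen in the demonstrations. The sketch below uses nearest-neighbor Euclidean distance over low-dimensional states. This is a simple heuristic chosen for illustration, and the function names and threshold are assumptions. It does not scale to images, and learned policies would need more capable methods.

```python
import math
from typing import List, Sequence


def nearest_distance(state: Sequence[float], demos: List[Sequence[float]]) -> float:
    """Euclidean distance from a state to the closest demonstrated state."""
    return min(math.dist(state, demo) for demo in demos)


def outside_training(state: Sequence[float], demos: List[Sequence[float]],
                     threshold: float) -> bool:
    """Flag states farther from every demonstration than the threshold."""
    return nearest_distance(state, demos) > threshold


# Toy 2-D states, e.g. gripper (x, y) positions seen during demonstrations.
demo_states = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)]

print(outside_training((0.9, 0.1), demo_states, threshold=0.5))  # False: near a demo
print(outside_training((3.0, 4.0), demo_states, threshold=0.5))  # True: far from all demos
```

A flagged state is a reason to slow down, stop, or ask for help. It is not proof that the policy would fail.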

Evaluation questions

Embodied AI should be evaluated on more than one successful video.

Ask:

- How many trials were run?

- What was the success rate?

- What objects and environments were excluded?

- Were failures counted?

- Was there teleoperation?

- Did the robot recover without help?

- How did it handle people entering the scene?

- Did it damage objects?

- What safety constraints were active?
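The first two questions belong together, because a success rate means little without the trial count behind it. The sketch below computes a Wilson score interval, a standard confidence interval for a binomial proportion, to show how wide the uncertainty is for a small number of trials. The function name and the example counts are illustrative.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a success rate (z = 1.96 gives about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


for successes, trials in [(9, 10), (90, 100)]:
    low, high = wilson_interval(successes, trials)
    print(f"{successes}/{trials}: {low:.2f} to {high:.2f}")
```

Both runs report a 90% success rate, but with 10 trials the interval spans roughly 0.60 to 0.98, while 100 trials narrow it considerably. The calculation assumes trials are independent and that failures were counted, which is exactly what the other questions probe.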

Practical use cases

Embodied AI is especially useful when a robot needs flexibility inside a bounded job:

- picking mixed goods from bins

- learning new warehouse SKUs

- following natural-language work instructions

- mobile inspection with anomaly detection

- household object search

- service robot navigation and interaction

- flexible manufacturing tasks

The sweet spot is not “do anything.” It is “adapt better within a known domain.”

Risks to watch

- Overgeneralization: the robot treats a new situation as if it were familiar.

- Hidden teleoperation: a human is quietly controlling part of the task, so autonomy is overstated.

- Weak recovery: the robot can act but cannot fail gracefully.

- Unsafe language obedience: the robot follows a command that conflicts with physical safety.

- Data leakage: cameras and maps capture sensitive information that can later be exposed.

- Benchmark theater: tests reward narrow demos rather than deployment quality.
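One way to guard against unsafe language obedience is to check every planned motion against physical constraints that no instruction can override. The sketch below is a minimal illustration: `PlannedMotion`, `violations`, and the default limits are hypothetical placeholders, not values from any safety standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PlannedMotion:
    speed_mps: float           # planned speed, meters per second
    human_distance_m: float    # distance to the nearest detected person
    inside_workspace: bool     # whether the target lies in the allowed zone


def violations(motion: PlannedMotion, max_speed_mps: float = 0.5,
               min_human_distance_m: float = 1.0) -> List[str]:
    """Return every violated constraint; an empty list means the motion may run."""
    found = []
    if motion.speed_mps > max_speed_mps:
        found.append("speed limit exceeded")
    if motion.human_distance_m < min_human_distance_m:
        found.append("person too close")
    if not motion.inside_workspace:
        found.append("target outside workspace")
    return found


# A spoken request such as "hurry up" might produce a plan like this one.
rushed = PlannedMotion(speed_mps=1.2, human_distance_m=0.6, inside_workspace=True)
print(violations(rushed))  # ['speed limit exceeded', 'person too close']
```

The check runs on the planned motion, not on the wording of the command, so it applies the same way however the request was phrased.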

Next steps

Read Robot Autonomy for the full stack that wraps an embodied model, then What Robots Can Actually Do to keep the capability envelope honest.