Robot autonomy is not one switch.

A robot can be autonomous in navigation but not manipulation. It can plan routes but need help with blocked doors. It can pick known objects but fail on new packaging. It can run all day in a mapped warehouse and be useless in a cluttered home.

Autonomy is a stack.

The autonomy layers

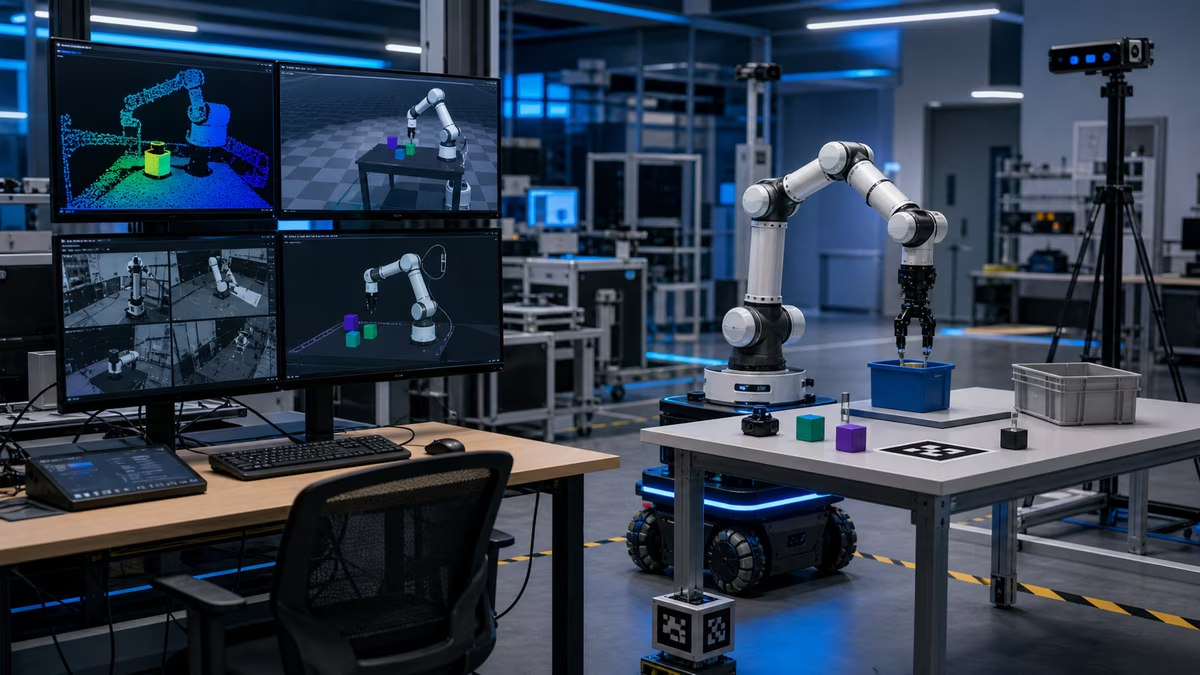

Sensors

Robots sense with cameras, depth cameras, lidar, radar, ultrasonic sensors, encoders, IMUs, force sensors, tactile sensors, microphones, and other instruments. Each sensor has blind spots. Cameras struggle with glare and darkness. Lidar may struggle with glass. Force sensors detect contact only after contact happens.

Localization

The robot estimates where it is. Indoors, this can involve SLAM, fiducial markers, wheel odometry, inertial measurement, beacons, or maps. Bad localization makes every downstream decision worse.
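
As a rough illustration of how relative and absolute sources combine, the sketch below fuses wheel odometry with an occasional absolute fix (for example from a beacon or fiducial marker) in one dimension, using a scalar Kalman-style update. The names `Estimate`, `predict`, and `correct`, and all noise values, are assumptions made for this example and do not come from any particular robot stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    position_m: float   # estimated position along a corridor, in metres
    variance_m2: float  # uncertainty of that estimate, in square metres


def predict(est: Estimate, odom_delta_m: float, odom_var_m2: float) -> Estimate:
    """Dead-reckoning step: move by the odometry reading; uncertainty grows."""
    return Estimate(est.position_m + odom_delta_m, est.variance_m2 + odom_var_m2)


def correct(est: Estimate, measured_m: float, meas_var_m2: float) -> Estimate:
    """Blend in an absolute position fix, weighted by relative uncertainty."""
    gain = est.variance_m2 / (est.variance_m2 + meas_var_m2)
    return Estimate(
        est.position_m + gain * (measured_m - est.position_m),
        (1.0 - gain) * est.variance_m2,
    )


# Ten odometry steps of 1 m each: drift accumulates in the variance.
est = Estimate(position_m=0.0, variance_m2=0.0)
for _ in range(10):
    est = predict(est, odom_delta_m=1.0, odom_var_m2=0.04)
drifted = est

# One absolute fix pulls the estimate toward the measurement and shrinks the variance.
fixed = correct(drifted, measured_m=10.5, meas_var_m2=0.1)
```

Without the correction step the variance only grows, which is one way to see why localization errors propagate into every later decision.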

Mapping

The robot needs a representation of space: walls, shelves, no-go zones, doors, workstations, chargers, humans, temporary obstacles, and semantic labels.

Perception

Perception identifies objects, people, signs, handles, labels, surfaces, hazards, and affordances. It answers “what is around me?”

Planning

Planning chooses what to do: route, arm motion, grasp, task sequence, charging schedule, or next inspection point.

Control

Control turns plans into motor commands. It keeps wheels, joints, grippers, and balance inside safe limits while reacting to feedback.

Safety layer

Safety monitors speed, force, zones, emergency stops, people, payloads, faults, and restricted actions. It should not depend only on the highest-level AI model behaving well.
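
One way to keep safety independent of the planner is a small gate that every speed command passes through, whatever produced it. The sketch below is a minimal example under assumed limits; `SafetyLimits`, `gate_speed`, and the numeric values are hypothetical and would be set by a real risk assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyLimits:
    max_speed_mps: float        # speed cap when no person is nearby
    max_force_n: float          # contact force above which motion must stop
    person_speed_factor: float  # fraction of max speed allowed near people


def gate_speed(requested_mps: float, measured_force_n: float,
               estop_pressed: bool, person_nearby: bool,
               limits: SafetyLimits) -> float:
    """Return the speed the robot may actually use, whatever the planner asked for."""
    if estop_pressed or measured_force_n > limits.max_force_n:
        return 0.0
    cap = limits.max_speed_mps
    if person_nearby:
        cap *= limits.person_speed_factor
    # Clamp symmetrically so reverse motion is limited too.
    return max(-cap, min(cap, requested_mps))


# Assumed example limits, not recommendations.
limits = SafetyLimits(max_speed_mps=2.0, max_force_n=50.0, person_speed_factor=0.25)
```

With these limits, a planner request of 3.0 m/s is cut to 2.0 m/s in an empty aisle, to 0.5 m/s near a person, and to zero whenever the emergency stop is pressed or the force limit is exceeded.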

Supervision

Supervision can be a human operator, remote support team, fleet manager, escalation policy, or approval gate. Good autonomy knows when to ask for help.

Degrees of autonomy

Use precise labels:

| Type | Meaning |
|---|---|
| Manual | A person controls the robot directly |
| Teleoperated | A person controls remotely |
| Assisted | Robot stabilizes or protects parts of the action |
| Scripted | Robot follows prebuilt routines |
| Semi-autonomous | Robot acts in a bounded task with human support |
| Autonomous navigation | Robot routes itself through a space |
| Autonomous manipulation | Robot handles objects without direct control |
| Fleet autonomy | Many robots coordinate jobs and charging |
| Open-ended autonomy | Robot handles broad unfamiliar tasks |

Most real systems combine several of these.
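
Because one robot usually mixes several labels, it can help to record a mode per capability rather than one label per robot. The sketch below is one possible encoding; the `Mode` enum collapses the table into control modes, and the capability names in `profile` are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    MANUAL = "manual"
    TELEOPERATED = "teleoperated"
    ASSISTED = "assisted"
    SCRIPTED = "scripted"
    SEMI_AUTONOMOUS = "semi-autonomous"
    AUTONOMOUS = "autonomous"


# Modes in which a person is actively at the controls.
HUMAN_DRIVEN = {Mode.MANUAL, Mode.TELEOPERATED, Mode.ASSISTED}

# Hypothetical profile for one warehouse robot: one label per capability.
profile = {
    "navigation": Mode.AUTONOMOUS,
    "pick_known_items": Mode.SCRIPTED,
    "pick_new_packaging": Mode.TELEOPERATED,
    "blocked_door": Mode.SEMI_AUTONOMOUS,
}


def capabilities_needing_operator(profile: dict) -> list:
    """List capabilities that cannot run without a person at the controls."""
    return sorted(name for name, mode in profile.items() if mode in HUMAN_DRIVEN)
```

For this profile, `capabilities_needing_operator(profile)` returns only `pick_new_packaging`, which makes the staffing implication of each label explicit.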

Fallback behavior

The fallback is where autonomy becomes trustworthy.

Bad fallback: keep trying, block an aisle, drop the object, or guess.

Good fallback:

- slow down

- stop safely

- preserve the object

- move to a safe pose

- mark the location

- ask for help

- provide a clear error reason

- log sensor data for review

If a robot has no good fallback, its autonomy is brittle.
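
The difference between "keep trying" and a good fallback can be sketched as a bounded retry that hands over to a fixed fallback sequence. This is a minimal illustration: `attempt_with_fallback`, `Outcome`, and the step names are assumptions, and a real robot would execute each step rather than just list it.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Ordered fallback steps, mirroring the list above.
FALLBACK_STEPS = [
    "slow_down", "stop_safely", "preserve_object", "move_to_safe_pose",
    "mark_location", "ask_for_help", "report_error_reason", "log_sensor_data",
]


@dataclass
class Outcome:
    succeeded: bool
    attempts: int
    error_reason: Optional[str] = None
    fallback_steps: List[str] = field(default_factory=list)


def attempt_with_fallback(action: Callable[[], bool], name: str,
                          max_attempts: int = 2) -> Outcome:
    """Try an action a bounded number of times, then fall back instead of looping."""
    for attempt in range(1, max_attempts + 1):
        if action():
            return Outcome(succeeded=True, attempts=attempt)
    return Outcome(
        succeeded=False,
        attempts=max_attempts,
        error_reason=f"{name} failed after {max_attempts} attempts",
        fallback_steps=list(FALLBACK_STEPS),
    )


# A grasp that never succeeds stands in for an unfamiliar package.
result = attempt_with_fallback(lambda: False, name="grasp", max_attempts=2)
```

The retry limit is the important design choice here: the robot gives up after a known number of attempts and always produces an error reason a person can act on.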

The role of maps

Maps can make robots much more reliable. They can also become stale.

A warehouse map changes when racks move, doors close, pallets appear, floor markings change, or construction begins. A home map changes when furniture moves, rugs shift, toys appear, or a door is left open.

A map is not a guarantee. It is a hypothesis that must be updated.
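
One simple way to treat a map as a hypothesis is to let trust in each feature decay until the robot sees it again. The sketch below uses an exponential half-life, which is an assumed model chosen for simplicity; `MapFeature` and the one-hour half-life are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MapFeature:
    name: str
    last_confirmed_s: float  # time the feature was last seen where the map says it is

    def confidence(self, now_s: float, half_life_s: float) -> float:
        """Trust in this feature, halving every `half_life_s` seconds unconfirmed."""
        age_s = max(0.0, now_s - self.last_confirmed_s)
        return 0.5 ** (age_s / half_life_s)

    def confirm(self, now_s: float) -> None:
        """A fresh observation matched the map, so reset the feature's age."""
        self.last_confirmed_s = now_s


rack = MapFeature(name="rack_A3", last_confirmed_s=0.0)
one_hour = 3600.0

# Two half-lives without confirmation: trust drops to a quarter.
stale = rack.confidence(now_s=2 * one_hour, half_life_s=one_hour)

# Seeing the rack again restores full trust.
rack.confirm(now_s=2 * one_hour)
fresh = rack.confidence(now_s=2 * one_hour, half_life_s=one_hour)
```

A planner could slow down or re-scan in areas where confidence is low instead of assuming the stored layout still holds.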

Human-in-the-loop design

Human support is not failure. It is often the best way to make a system useful.

Good human-in-the-loop design defines:

- which events require help

- how the robot asks

- what information the human sees

- what actions the human may take

- how the system learns from interventions

- when the robot must stop instead of asking

The goal is not zero human involvement on day one. The goal is fewer, clearer, safer interventions over time.
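
These decisions can be written down as an explicit escalation policy rather than left implicit in code paths. The sketch below shows one possible shape; the event names, the `Response` enum, and the fields in the help request are all hypothetical.

```python
from enum import Enum


class Response(Enum):
    CONTINUE = "continue"
    ASK_OPERATOR = "ask_operator"
    STOP = "stop"


# Hypothetical event names; a real deployment would define its own.
ESCALATION_POLICY = {
    "path_replanned": Response.CONTINUE,
    "door_blocked": Response.ASK_OPERATOR,
    "unknown_object": Response.ASK_OPERATOR,
    "person_too_close": Response.STOP,
    "sensor_fault": Response.STOP,
}


def respond_to(event: str) -> Response:
    """Look up the response; events nobody planned for default to STOP."""
    return ESCALATION_POLICY.get(event, Response.STOP)


def build_help_request(event: str, location: str, snapshot_id: str) -> dict:
    """Bundle what the operator sees and which actions they may take."""
    return {
        "event": event,
        "location": location,
        "snapshot_id": snapshot_id,
        "allowed_actions": ["retry", "reroute", "abort_task"],
    }
```

Defaulting unknown events to `STOP` is a deliberate choice in this sketch: an event nobody anticipated is treated as a reason to halt, not as something to ask about while still moving.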

Autonomy evaluation

Measure:

- task success rate

- intervention rate

- mean time between interventions

- near misses

- false positives and false negatives in detection (for example, of obstacles, people, or hazards)

- recovery success

- path efficiency

- energy use

- object damage

- safety stops

- operator workload

A robot that succeeds 95 percent of the time can still be a poor deployment if the remaining 5 percent creates expensive or dangerous exceptions, so weigh failures by their cost, not only by their count.
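
Several of these metrics fall out of a simple per-run log. The sketch below computes a few of them and adds an average exception cost, to show how a high success rate can hide an expensive tail. The `Run` record, the field names, and the cost figure are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Run:
    succeeded: bool
    interventions: int
    duration_h: float
    exception_cost: float = 0.0  # cost of damage, delay, or cleanup for this run


def summarize(runs: List[Run]) -> Dict[str, float]:
    """Aggregate per-run records into fleet-level autonomy metrics."""
    total = len(runs)
    interventions = sum(r.interventions for r in runs)
    hours = sum(r.duration_h for r in runs)
    return {
        "task_success_rate": sum(r.succeeded for r in runs) / total,
        "interventions_per_run": interventions / total,
        "mean_hours_between_interventions":
            hours / interventions if interventions else float("inf"),
        "exception_cost_per_run": sum(r.exception_cost for r in runs) / total,
    }


# 19 clean half-hour runs and one failure that needed help and cost 400 units.
runs = [Run(True, 0, 0.5) for _ in range(19)] + [Run(False, 1, 0.5, 400.0)]
metrics = summarize(runs)
```

Here the success rate is 0.95, yet the single failure adds an average cost of 20 units to every run, which may outweigh what the robot saves on the other nineteen.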

Autonomy and AI models

Large models can help with language, task planning, scene interpretation, and flexible policies. They should not be the only safety layer.

For physical systems, separate:

- intent understanding

- task planning

- motion planning

- low-level control

- safety monitoring

- policy and permissions

- audit logging

This separation makes it easier to test, constrain, and debug behavior.
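
A small sketch of that separation: a model proposes task steps, a separate permission check decides which steps are allowed, and every decision is logged. `propose_plan` is a stand-in for a large model, not a real model call, and the action names and allow-list are hypothetical.

```python
from typing import Dict, List, Tuple

# Policy layer: actions this robot is permitted to perform at all.
ALLOWED_ACTIONS = {"navigate", "pick", "place"}

audit_log: List[Dict[str, str]] = []


def propose_plan(instruction: str) -> List[str]:
    """Stand-in for a large model that turns an instruction into task steps."""
    if "unlock" in instruction:
        return ["navigate", "unlock_door", "place"]
    return ["navigate", "pick", "place"]


def authorize(plan: List[str]) -> Tuple[List[str], List[str]]:
    """Split a proposed plan into permitted and rejected steps, logging each decision."""
    approved: List[str] = []
    rejected: List[str] = []
    for step in plan:
        ok = step in ALLOWED_ACTIONS
        (approved if ok else rejected).append(step)
        audit_log.append({"step": step, "decision": "approved" if ok else "rejected"})
    return approved, rejected


approved, rejected = authorize(propose_plan("unlock the storeroom and drop the box"))
```

In this example `unlock_door` is rejected because the policy layer never granted it, regardless of how confident the model was. A real system would likely reject or escalate the whole plan when any step is refused, since running a partial plan can be unsafe in its own right.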

Build-vs-buy checklist

Before adopting an autonomous robot system:

- Name the task boundaries.

- Name the allowed operating area.

- Name the fallback state.

- Define who supervises.

- Define what gets logged.

- Define update and maintenance responsibilities.

- Measure the baseline human workflow.

- Pilot with real exceptions, not only ideal runs.

Next steps

Read Embodied AI for model-driven skills, then Robot Safety for the constraints that must wrap any autonomy layer.